Your OCR system reports 98% accuracy. Your downstream systems tell a different story. The invoices that “processed successfully” have mismatched line items. The contracts that “extracted cleanly” lost their table relationships. The vendor onboarding forms that passed all automated checks still require a team member to manually verify 30% of the fields.

This isn’t a tuning problem. A 2026 Rossum survey of 450 finance leaders found that 54.2% of teams still rely on legacy OCR, even though they know it underperforms. Not because they haven’t tried to fix it, but because the failures they’re experiencing are architectural, not configurable.

This article is for the practitioner who’s already running OCR in production and hitting the wall. Not the one evaluating document processing for the first time, but the one who has tuned, patched, and worked around a system that keeps failing in ways that are hard to explain to stakeholders.

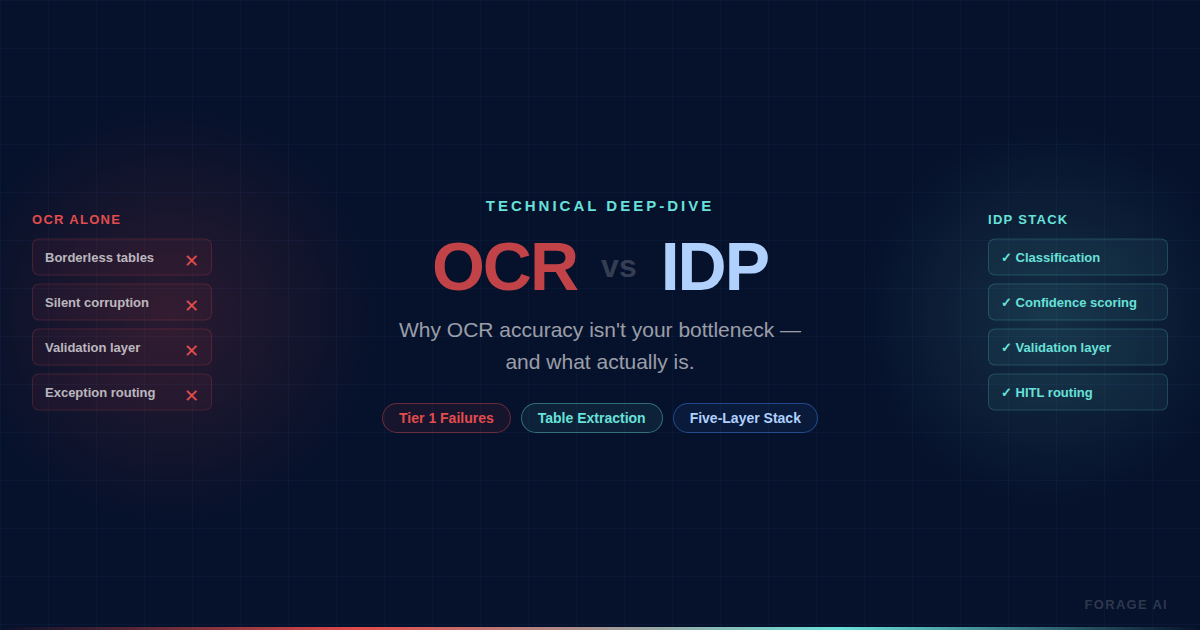

What follows is a diagnostic framework covering where OCR fails by severity, why tables are the breaking point most teams hit first, when OCR is genuinely sufficient versus when you need Intelligent Document Processing (IDP), and what IDP actually adds at the architectural level. The goal isn’t to sell you on a switch. It’s to give you the language to diagnose what’s actually broken and make confident decisions.

Quick digest:

- Where does OCR actually stop working, and why does tuning not help?

- What’s the real cost of “good enough” OCR accuracy?

- When should you stay with OCR, and when have you outgrown it?

- What does IDP add beyond better character recognition?

What OCR Actually Does (and Where It Stops)

Optical Character Recognition (OCR) converts images of text, such as scanned documents, photos, or PDFs, into machine-readable, searchable, and editable digital text. It is effective on clean, typed documents with consistent layouts, and digitizing archives, making PDFs searchable, and extracting text from standardized tax forms are all legitimate use cases.

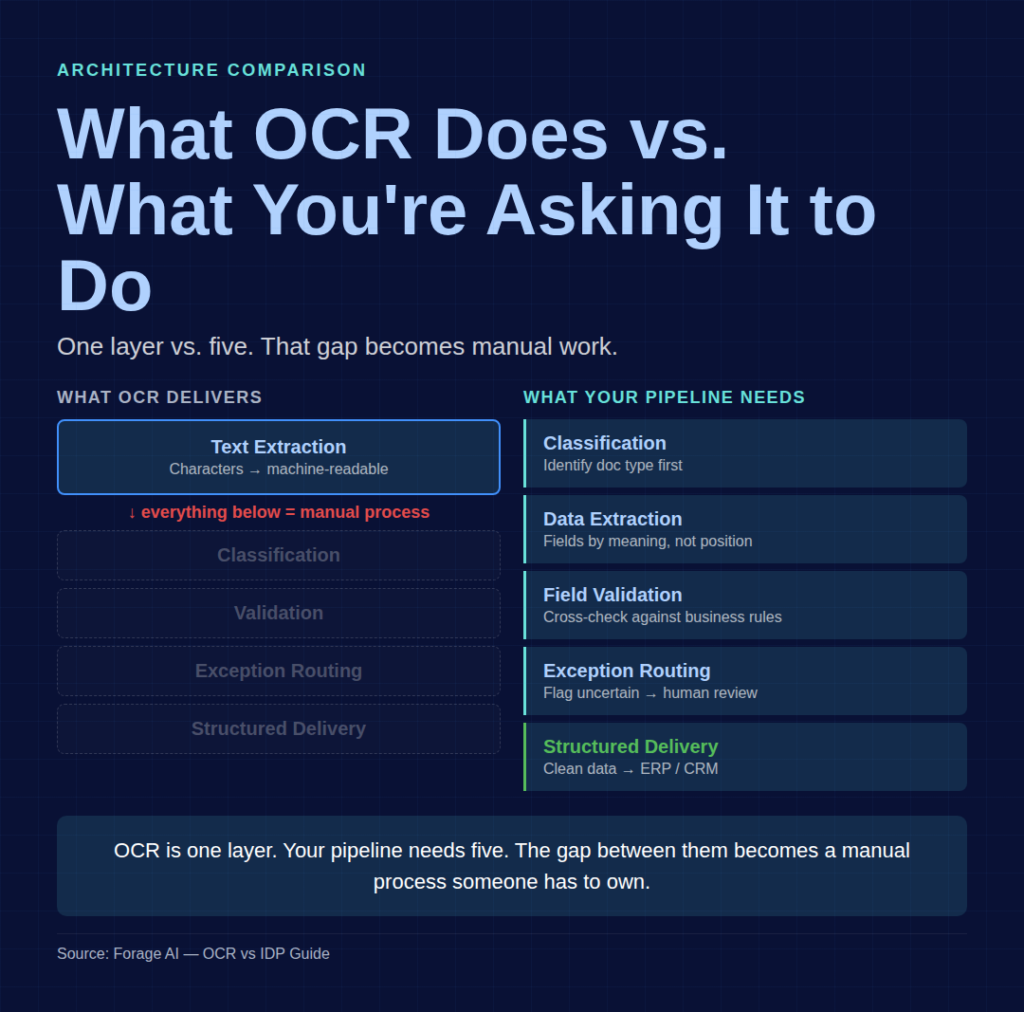

The problem starts when teams treat OCR as a complete document processing solution rather than a single layer in a larger pipeline. That distinction matters because everything OCR cannot do becomes a manual process that someone has to manage.

Traditional OCR-based extraction runs on a template model: you define fixed zones on the document (specific pixel regions where fields like “invoice number” or “vendor name” are expected to appear), write rules to validate the output, and the system works as long as every document follows the same layout. Under lab conditions, printed-text OCR achieves 99%+ character accuracy. That number is real, but it is also misleading.

Character accuracy is not the same as document accuracy, and the gap compounds fast. At 95% character accuracy, a 2,500-character invoice contains about 125 characters that need manual correction. At $2.56 in correction labor per invoice across 10,000 invoices a year, that is $25,600 in correction costs alone, before you account for the downstream errors that slip through undetected.
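The arithmetic can be reproduced in a few lines. This sketch uses the article's figures (95% character accuracy, 2,500 characters, $2.56 of correction labor per invoice, 10,000 invoices a year) and adds one assumption of its own, labeled in the code: that character errors occur independently, which makes it possible to estimate how rarely an entire invoice comes through with zero errors.

```python
# Worked version of the correction-cost arithmetic.
# ASSUMPTION (not from the source): character errors are independent,
# so P(error-free document) = char_accuracy ** chars_per_doc.

def expected_char_errors(char_accuracy: float, chars_per_doc: int) -> float:
    """Expected number of wrong characters in one document."""
    return chars_per_doc * (1.0 - char_accuracy)

def p_error_free(char_accuracy: float, chars_per_doc: int) -> float:
    """Probability that every character in the document is correct."""
    return char_accuracy ** chars_per_doc

def annual_correction_cost(docs_per_year: int, cost_per_doc: float) -> float:
    """Labor cost if every document needs one correction pass."""
    return docs_per_year * cost_per_doc

print(round(expected_char_errors(0.95, 2500)))      # 125
print(p_error_free(0.99, 2500))                     # ~1e-11: even at 99%, clean invoices are rare
print(round(annual_correction_cost(10_000, 2.56)))  # 25600
```

The second line is the point of "the gap compounds": under the independence assumption, even 99% character accuracy almost never yields a fully correct 2,500-character document.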

OCR extracts characters but does not classify documents, validate field relationships, flag uncertain extractions, or learn from corrections. When your documents are structured and stable, that is fine. When they are not, you are building manual processes around an architectural gap that tuning alone will never close.

Where OCR Breaks: A Production Failure Taxonomy

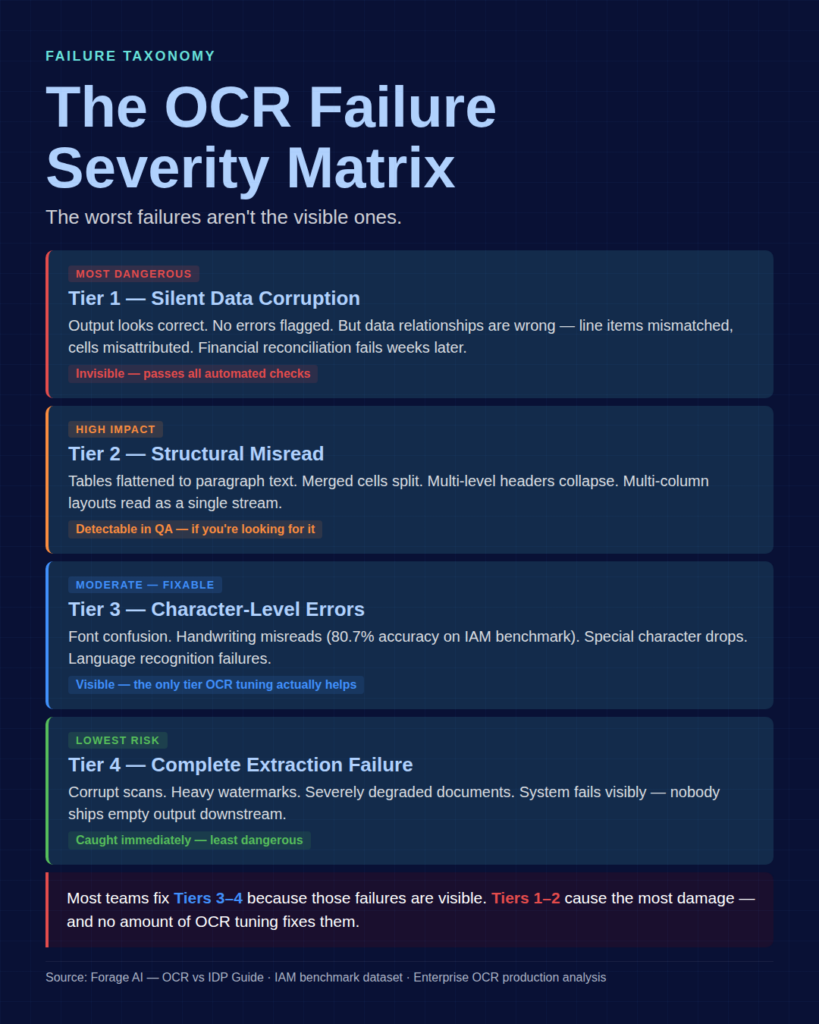

Most articles about OCR limitations give you a flat list of poor image quality, handwriting issues, and complex layouts, but that framing is not useful for diagnosing production failures. What matters is severity, because the worst OCR failures are not the ones that throw errors and get caught. They are the ones that pass through silently.

Tier 1: Silent Data Corruption (Most Dangerous)

The output looks correct, no errors are flagged, and the extraction completes successfully, but the data relationships are wrong.

A multi-column invoice gets read left-to-right as a single stream. Line item descriptions end up matched to the wrong quantities. The table header shifts one column to the right, and every cell below it is misattributed. The system processes it, your pipeline ingests it, and nobody catches it until a financial reconciliation fails three weeks later.
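Nothing in this kind of misread output is malformed, which is why it passes. A minimal sketch with made-up line items shows how pairing the quantity column with the wrong rows still produces plausible numbers, and how one cheap arithmetic cross-check (quantity × unit price should equal the line amount) can surface it. The data and helper names are hypothetical, not taken from any OCR product.

```python
# Hypothetical line items: parallel columns as an extractor might return them.
descriptions = ["Steel bolts M8", "Hex nuts M8", "Washers M8"]
quantities = [40, 100, 200]
unit_prices = [0.25, 0.05, 0.02]
amounts = [10.00, 5.00, 4.00]

# Correct extraction: each column value stays on its own row.
correct_rows = list(zip(descriptions, quantities, unit_prices, amounts))

# Simulated misread: the quantity column is paired with the wrong rows
# (offset by one). Every value is still a valid-looking number.
shifted_quantities = quantities[1:] + quantities[:1]
misread_rows = list(zip(descriptions, shifted_quantities, unit_prices, amounts))

def row_is_consistent(row, tolerance=0.01):
    """Cross-check one line item: quantity * unit_price should equal amount."""
    _, qty, price, amount = row
    return abs(qty * price - amount) <= tolerance

def inconsistent_rows(rows):
    """Return the descriptions of line items that fail the cross-check."""
    return [row[0] for row in rows if not row_is_consistent(row)]

print(inconsistent_rows(correct_rows))  # []
print(inconsistent_rows(misread_rows))  # all three descriptions
```

A check like this only catches misattributions that break an arithmetic relationship; it is a signal, not a guarantee.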

This is the failure tier OCR tuning cannot fix, because the system does not know it is wrong. Over 50% of OCR-extracted data still requires manual checking in enterprise environments, and the primary reason is not character-level errors but structural misreads that produce plausible-looking yet incorrect output.

Tier 2: Structural Misread (High Impact)

The system partially understands the document but misinterprets its structure. Tables get flattened into paragraph text. Merged cells split into separate entries. Multi-level headers collapse into a single row, losing the parent-child relationships between column groups. Multi-column layouts get jumbled into a single text stream.

Unlike silent corruption, structural misreads are often detectable during quality assurance (QA) reviews if you are looking for them, but most OCR pipelines lack structural validation. They check whether text was extracted, not whether the relationships between fields were preserved.
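A structural check does not have to be elaborate. A minimal sketch, assuming the OCR layer returns a table as a header row plus rows of cell strings (a hypothetical shape; real OCR outputs differ): it flags rows whose cell count does not match the header, which is what a flattened row or a split merged cell typically produces, and numeric columns that suddenly contain non-numeric text.

```python
import re

# Hypothetical OCR table output: one header row plus data rows of cell strings.
header = ["Item", "Qty", "Unit Price", "Amount"]
rows = [
    ["Steel bolts M8", "40", "0.25", "10.00"],
    ["Hex nuts M8", "100", "0.05"],          # a cell went missing
    ["Washers M8 200 0.02 4.00"],            # row flattened into one cell
]

NUMERIC = re.compile(r"^-?\d+(\.\d+)?$")
NUMERIC_COLUMNS = {"Qty", "Unit Price", "Amount"}  # assumption: known per table type

def structural_issues(header, rows):
    """Return (row_index, message) pairs describing structural problems."""
    issues = []
    for i, row in enumerate(rows):
        if len(row) != len(header):
            issues.append((i, f"expected {len(header)} cells, got {len(row)}"))
            continue  # column checks are meaningless once alignment is lost
        for name, value in zip(header, row):
            if name in NUMERIC_COLUMNS and not NUMERIC.match(value.replace(",", "")):
                issues.append((i, f"non-numeric value {value!r} in column {name!r}"))
    return issues

for issue in structural_issues(header, rows):
    print(issue)
```

The design choice is to stop checking a row once its width is wrong: column-level rules on a misaligned row would only generate noise.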

Tier 3: Character-Level Errors (Moderate Impact)

This is what most teams think of when they think “OCR failure.” Font confusion. Handwriting misreads, where accuracy drops to 80.7% on the IAM benchmark dataset, and cursive hits roughly 88% word accuracy at best. Special character drops. Language-specific recognition failures.

This is the only tier where traditional OCR tuning helps, through better preprocessing, higher-resolution scans, and domain-specific training data. If your failures are primarily Tier 3, investing in your OCR pipeline is the right call and you probably do not need IDP.
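As an example of the kind of preprocessing that does help at this tier, here is a minimal pure-Python sketch of Otsu's method, a standard technique for choosing a global binarization threshold for a grayscale scan before it reaches the OCR engine. It assumes 8-bit grayscale pixel values in a flat list; in practice you would use an image library's implementation rather than this one, and Otsu is only one of several thresholding options.

```python
def otsu_threshold(pixels):
    """Return the threshold t (0-255) that maximizes between-class variance.

    Pixels <= t form the dark class (ink), pixels > t the light class (paper).
    """
    histogram = [0] * 256
    for p in pixels:
        histogram[p] += 1

    total = len(pixels)
    sum_all = sum(i * count for i, count in enumerate(histogram))

    best_t, best_variance = 0, -1.0
    weight_dark, sum_dark = 0, 0
    for t in range(256):
        weight_dark += histogram[t]
        if weight_dark == 0:
            continue
        weight_light = total - weight_dark
        if weight_light == 0:
            break
        sum_dark += t * histogram[t]
        mean_dark = sum_dark / weight_dark
        mean_light = (sum_all - sum_dark) / weight_light
        # Unnormalized between-class variance; fine for finding the argmax.
        variance = weight_dark * weight_light * (mean_dark - mean_light) ** 2
        if variance > best_variance:
            best_t, best_variance = t, variance
    return best_t

# Toy bimodal "scan": dark ink pixels around 30-40, light paper around 200-210.
scan = [30] * 50 + [40] * 50 + [200] * 100 + [210] * 100
t = otsu_threshold(scan)
binary = [0 if p <= t else 255 for p in scan]
print(t)  # 40
```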

Tier 4: Complete Extraction Failure (Lowest Risk)

Corrupt scans, heavy watermarks, unusual file formats, and severely degraded documents cause the system to fail visibly. These are the least dangerous failures because they get caught immediately and nobody ships empty or garbled output downstream without noticing.

The critical insight: Most teams focus their OCR improvement efforts on Tiers 3 and 4 because those failures are visible. But Tiers 1 and 2 cause the most damage precisely because they’re invisible. If your document pipeline struggles and you can’t figure out why your downstream data quality is degrading, you’re likely dealing with Tier 1 or Tier 2 failures that no amount of OCR configuration will resolve.

[IMAGE: The OCR Failure Severity Matrix, a 4-tier visual showing failure types mapped against document types (tables, forms, handwritten, multi-format) with severity indicators]

Production experience reinforces this pattern. Practitioners report that leading OCR tools tested on multilingual invoices destroyed table formatting despite performing well on clean test documents, and one widely used cloud OCR service reports an average accuracy of 99.3% yet scores 0% on individual problem images. Aggregate accuracy numbers hide the catastrophic outliers that actually break your pipeline.
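The gap between an average and its outliers is easy to demonstrate. This sketch uses invented per-document accuracy figures (not measurements of any real service) to show how a high mean can coexist with a document that fails completely, and reports two numbers worth tracking next to the mean: the worst document and the share of documents below an acceptable floor.

```python
from statistics import mean

# Invented per-document field accuracy for a batch of 100 documents:
# 97 clean documents, two partial misreads, and one total failure.
per_doc_accuracy = [1.0] * 97 + [0.9, 0.8, 0.0]

def accuracy_report(scores, floor=0.95):
    """Summarize a batch by its mean, its worst document, and the share below a floor."""
    below = [s for s in scores if s < floor]
    return {
        "mean": round(mean(scores), 3),
        "worst": min(scores),
        "share_below_floor": len(below) / len(scores),
    }

print(accuracy_report(per_doc_accuracy))
# {'mean': 0.987, 'worst': 0.0, 'share_below_floor': 0.03}
```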

There is also what practitioners call the “Day 11” pattern, where a system works well during testing and early production then gradually degrades as edge cases accumulate. Format changes, new vendor layouts, and evolving document styles each add a small failure rate that compounds silently over weeks, and these compounding failures are almost always Tier 1 or Tier 2 because the system does not throw errors. It just quietly gets things wrong.
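Because these failures do not raise errors, the practical defense is to watch a downstream signal over time. A minimal sketch, assuming your pipeline already records, for each week, the share of documents that later needed a manual fix or failed a downstream check: it compares a rolling window against the baseline measured during testing and flags sustained drift. The window size, tolerance, and history below are arbitrary illustration values.

```python
def detect_drift(weekly_error_rates, baseline, window=3, tolerance=0.02):
    """Return the index of the first week where the rolling mean of the last
    `window` weeks exceeds baseline + tolerance, or None if it never does."""
    for end in range(window, len(weekly_error_rates) + 1):
        recent = weekly_error_rates[end - window:end]
        if sum(recent) / window > baseline + tolerance:
            return end - 1
    return None

# Hypothetical history: stable at first, then creeping up as new layouts arrive.
rates = [0.02, 0.02, 0.02, 0.03, 0.05, 0.06, 0.08, 0.09]
print(detect_drift(rates, baseline=0.02))  # 5 (the sixth week)
```

A rolling mean rather than a single-week spike is used so that one unusual batch does not trigger an alert, at the cost of detecting real drift a little later.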

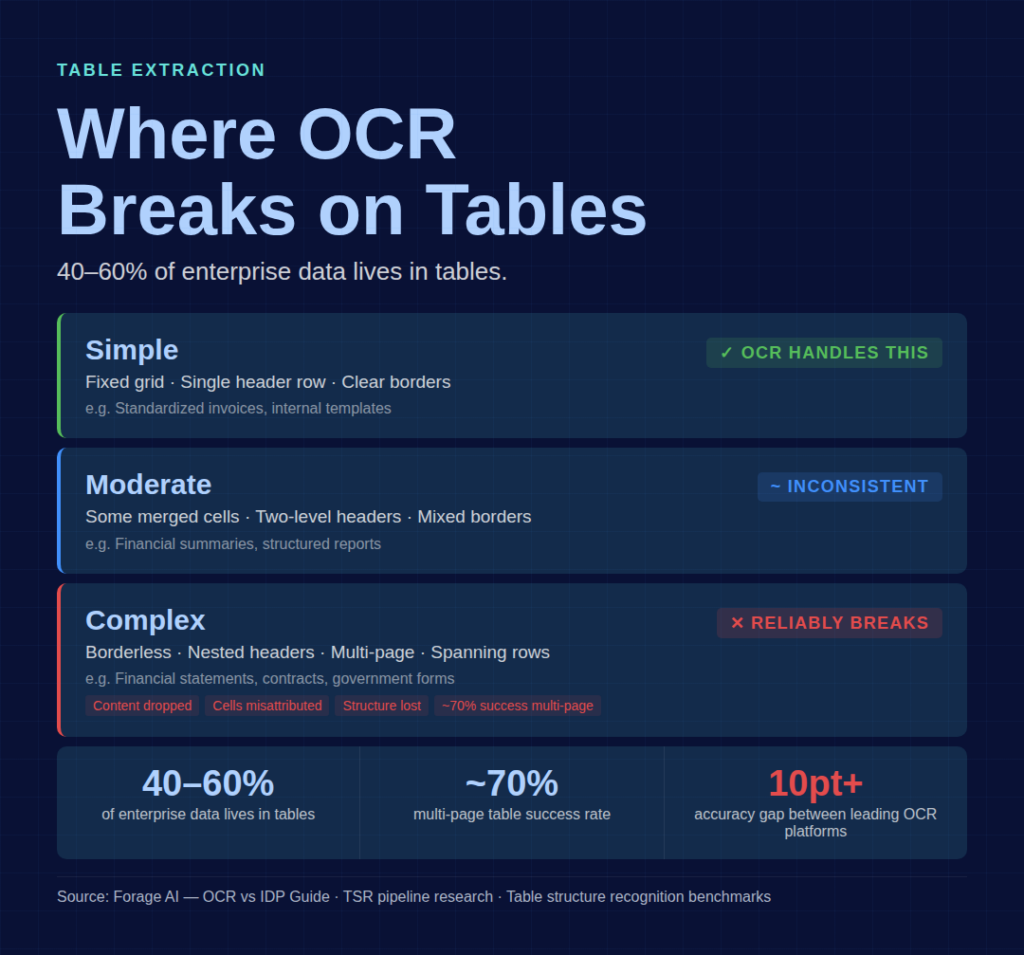

Why Tables Are Where OCR Dies

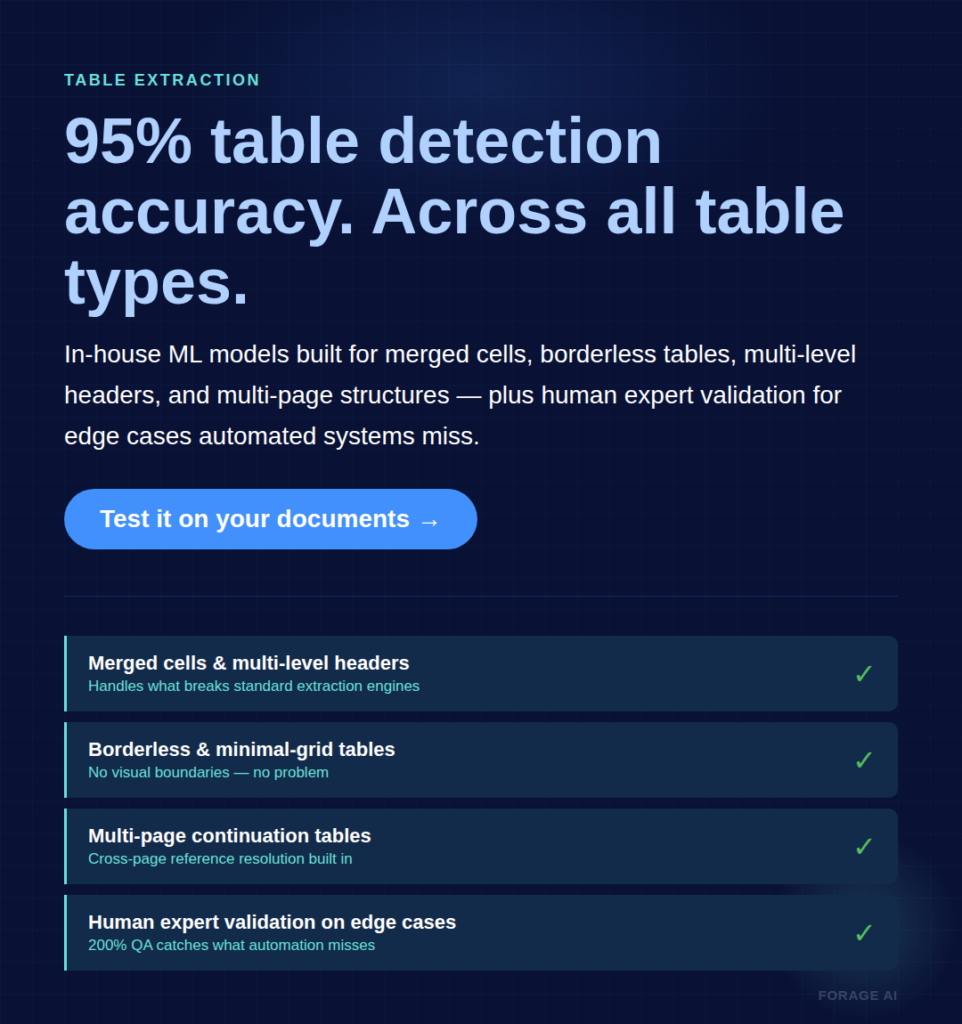

If you have run OCR on production documents for any length of time, you already know where the system breaks first. Tables. Between 40% and 60% of enterprise information lives in tables, and it is in tables that the gap between character recognition and document understanding becomes impossible to ignore.

OCR reads documents line by line, left to right, but tables require two-dimensional spatial reasoning: the system must understand which cell belongs to which row and column, how headers relate to the data beneath them, and where one table ends and another begins. That is a fundamentally different problem from character recognition, and one OCR was not designed to solve.
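A minimal sketch of that two-dimensional step: given word boxes with coordinates (a hypothetical `(text, x, y)` shape; real engines return richer boxes), group words into rows by vertical position, then assign each word to the nearest column anchor. The tolerance and the choice of the header row as column anchors are assumptions; production table recognition relies on learned models rather than fixed rules like these.

```python
# Hypothetical OCR word boxes: (text, x_left, y_top) in pixels.
words = [
    ("Item", 10, 100), ("Qty", 200, 101), ("Amount", 300, 99),
    ("Bolts", 10, 130), ("40", 205, 131), ("10.00", 300, 129),
    ("Nuts", 12, 160), ("100", 201, 162), ("5.00", 302, 160),
]

def group_rows(words, y_tolerance=5):
    """Cluster words whose y coordinate is within y_tolerance of a row's first word."""
    rows = []
    for word in sorted(words, key=lambda w: w[2]):
        if rows and abs(word[2] - rows[-1][0][2]) <= y_tolerance:
            rows[-1].append(word)
        else:
            rows.append([word])
    return rows

def to_grid(rows, column_x):
    """Place each word in the column whose anchor x is closest to the word's x."""
    grid = []
    for row in rows:
        cells = [""] * len(column_x)
        for text, x, _ in row:
            col = min(range(len(column_x)), key=lambda c: abs(column_x[c] - x))
            cells[col] = (cells[col] + " " + text).strip()
        grid.append(cells)
    return grid

# Use the header row's x positions (left to right) as column anchors.
rows = group_rows(words)
anchors = [x for _, x, _ in sorted(rows[0], key=lambda w: w[1])]
for cells in to_grid(rows, anchors):
    print(cells)
```

Reading the same boxes as one left-to-right stream would yield "Item Qty Amount Bolts 40 10.00 ..." with no record of which value belongs under which header; the grid keeps that relationship explicit.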

Specific table structures each break OCR in their own way.

Merged cells. When cells span multiple columns or rows, OCR either splits the content across the cells it expects to find or omits it entirely. Even cloud-native enterprise OCR tools struggle with this, and users consistently report content being incorrectly split or dropped altogether.

Multi-level headers. A table with two or three header rows, where top-level categories span multiple sub-columns, requires understanding nested relationships. OCR typically recognizes spans on the first header row, but stumbles when the second row uses lighter borders. Three-row headers with nested groups are returned flattened, with incorrect span relationships.
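Preserving nested headers means keeping each column's full path from the top-level group down. A minimal sketch, assuming the header rows have already been detected and each header cell carries its column span (a hypothetical `(label, span)` representation): it expands the spans and joins parent and child labels, so "Revenue" under "Q1" stays distinguishable from "Revenue" under "Q2".

```python
def flatten_headers(header_rows, separator=" / "):
    """Expand (label, span) header rows into one full-path name per column."""
    expanded = []
    for row in header_rows:
        labels = []
        for label, span in row:
            labels.extend([label] * span)  # a spanning cell covers `span` columns
        expanded.append(labels)

    width = len(expanded[0])
    if any(len(labels) != width for labels in expanded):
        raise ValueError("header rows cover different numbers of columns")

    names = []
    for col in range(width):
        parts = []
        for labels in expanded:
            label = labels[col]
            # Skip blanks and vertical repeats (a cell merged down through rows).
            if label and (not parts or parts[-1] != label):
                parts.append(label)
        names.append(separator.join(parts))
    return names

# Hypothetical two-row header: "Region" spans both rows (repeated below),
# "Q1" and "Q2" each span two sub-columns.
header_rows = [
    [("Region", 1), ("Q1", 2), ("Q2", 2)],
    [("Region", 1), ("Revenue", 1), ("Cost", 1), ("Revenue", 1), ("Cost", 1)],
]
print(flatten_headers(header_rows))
# ['Region', 'Q1 / Revenue', 'Q1 / Cost', 'Q2 / Revenue', 'Q2 / Cost']
```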

Borderless tables. No visual grid means OCR can’t detect the table structure. The content gets extracted as flowing text with no cell boundaries preserved. Financial statements, research reports, and many government forms extensively use borderless tables.
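For borderless tables, one classic heuristic (not a description of how any specific product works) is a whitespace projection: on text laid out in fixed-width lines, character positions that are blank in every line are likely gutters between columns. A minimal sketch, assuming the page has already been rendered to monospaced text lines; the minimum gutter width of two characters is an arbitrary choice that keeps single spaces inside cells such as "Steel bolts" from splitting a column.

```python
def column_spans(lines, min_gap=2):
    """Return (start, end) character ranges of columns, separated by gutters
    at least `min_gap` characters wide that are blank in every line."""
    width = max(len(line) for line in lines)
    padded = [line.ljust(width) for line in lines]
    blank = [all(line[i] == " " for line in padded) for i in range(width)]

    spans, start, gap = [], None, 0
    for i, is_blank in enumerate(blank):
        if is_blank:
            gap += 1
            if start is not None and gap >= min_gap:
                spans.append((start, i - gap + 1))  # end at the first blank of the gutter
                start = None
        else:
            if start is None:
                start = i
            gap = 0
    if start is not None:
        spans.append((start, width))
    return spans

def split_columns(lines, spans):
    """Cut each line into stripped cell strings using the detected spans."""
    return [[line[s:e].strip() for s, e in spans] for line in lines]

lines = [
    "Item          Qty   Amount",
    "Steel bolts    40    10.00",
    "Hex nuts      100     5.00",
]
for cells in split_columns(lines, column_spans(lines)):
    print(cells)
```

The heuristic fails on proportional fonts, wrapped cells, and columns that touch, which is one reason borderless tables stay hard.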

Multi-page tables compound all of these problems. A table that starts on page 3 and continues on page 5, with a different table on page 4, has an approximately 70% success rate with current tools, and the remaining 30% require manual reconstruction.
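Reconstruction across pages mostly comes down to deciding whether a fragment continues an earlier table. A minimal sketch of one heuristic, assuming each page's tables arrive as `(page, header, rows)` fragments (a hypothetical shape): a fragment joins the most recent table with the same column count if it repeats that table's header or has no header of its own. Two interleaved tables with the same width would defeat this rule, which illustrates why the remaining cases end up needing manual reconstruction.

```python
def stitch(fragments):
    """Merge table fragments that continue across pages.

    Each fragment is (page, header, rows); header is None when the fragment
    starts without a header row (such fragments must have at least one row).
    Returns merged tables as dicts with header, rows, and pages.
    """
    tables = []
    for page, header, rows in fragments:
        width = len(header) if header is not None else len(rows[0])
        target = None
        for table in reversed(tables):  # most recent table first
            same_width = len(table["header"]) == width
            if same_width and (header is None or header == table["header"]):
                target = table
                break
        if target is not None:
            target["rows"].extend(rows)
            target["pages"].append(page)
        elif header is not None:
            tables.append({"header": header, "rows": list(rows), "pages": [page]})
        else:
            raise ValueError(f"headerless fragment on page {page} matches no open table")
    return tables

# The page 3 / page 4 / page 5 scenario, with invented content.
fragments = [
    (3, ["Item", "Qty", "Amount"], [["Bolts", "40", "10.00"]]),
    (4, ["Date", "Event"], [["2024-01-02", "Shipped"]]),
    (5, None, [["Nuts", "100", "5.00"]]),
]
for table in stitch(fragments):
    print(table["pages"], table["rows"])
```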

Table complexity grading helps clarify where the risk sits.

- Simple (fixed grid, single header row): within OCR’s capability

- Moderate (some merged cells, two-level headers): inconsistent results

- Complex (borderless, nested, multi-page, spanning rows): reliably breaks OCR
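The grading can be turned into a triage rule for a document inventory. A minimal sketch, assuming you can tag each table with a few features (the `TableFeatures` fields are hypothetical), mapping them onto the three grades:

```python
from dataclasses import dataclass

@dataclass
class TableFeatures:
    header_rows: int = 1
    has_merged_cells: bool = False
    has_borders: bool = True
    spans_pages: bool = False
    has_row_spans: bool = False

def grade(t: TableFeatures) -> str:
    """Map table features onto the simple / moderate / complex grades."""
    if not t.has_borders or t.spans_pages or t.has_row_spans or t.header_rows > 2:
        return "complex"   # borderless, multi-page, spanning rows, or nested headers
    if t.has_merged_cells or t.header_rows == 2:
        return "moderate"  # some merged cells or two-level headers
    return "simple"        # fixed grid, single header row

print(grade(TableFeatures()))                   # simple
print(grade(TableFeatures(header_rows=2)))      # moderate
print(grade(TableFeatures(has_borders=False)))  # complex
```

Counting how many tables in a representative sample land in each grade gives a rough, defensible picture of how much of your volume sits in the risky categories.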

If your document mix includes moderate-to-complex tables, that is the clearest signal you have outgrown OCR, and the next section gives you the full criteria to confirm it.

The accuracy gap is significant and measurable, with testing showing differences of more than 10 percentage points between leading platforms on identical table documents. Research into table structure recognition (TSR) pipelines confirms that integrating structure detection with OCR remains a major technical challenge, particularly for cells that span multiple rows.

This is why table detection accuracy, not character accuracy, matters for complex document processing. Forage AI’s IDP service achieves 95% table detection accuracy across all table types using in-house ML models that go beyond traditional grid structures, combined with human expert validation for the edge cases that automated systems miss.

When to Stay with OCR and When You Have Outgrown It

Not every document pipeline needs IDP, and overengineering your extraction stack wastes money while adding unnecessary operational complexity. The decision comes down to your document types, your volume, and the kind of accuracy your downstream systems actually require.

Stay with OCR if:

- Your documents use fixed layouts. Tax forms, standardized applications, and internal templates are examples of formats that never change.

- You process fewer than 5 document types with stable structures.

- Your accuracy requirements are at the character level, not the field level. You need searchable PDFs, not structured data extraction.

- Volume is low enough that manual QC catches the errors OCR misses. If one person can spot-check every output, you don’t have a scale problem yet.

You’ve outgrown OCR when:

- Document formats vary across vendors, clients, or sources. If you’re managing 50+ templates and spending engineering time adding new ones every month, that’s a template maintenance burden that compounds.

- Table extraction is required. If your accuracy depends on preserving cell-to-header relationships, OCR’s architectural ceiling applies.

- You need field-level accuracy, not just text extraction. “The document says $12,500” is not the same as “the total_amount field for vendor_id 4392 is $12,500.”

- Volume makes manual QC unsustainable. At 10,000+ documents per month, manually verifying even 10% of outputs becomes a full-time role.

- You’re processing semi-structured or unstructured documents (contracts, correspondence, claims) where layouts are unpredictable.

- Compliance requires audit trails. Regulated industries need to prove extraction accuracy, not just assert it.

[IMAGE: The OCR-to-IDP Decision Tree, a flowchart starting with document variability, branching through table complexity, volume, accuracy requirements, and compliance needs]
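The two checklists can be encoded as a first-pass screen. A minimal sketch, assuming the thresholds stated above (fewer than 5 document types, 50+ templates, 10,000+ documents per month) and treating any single "outgrown" signal as enough to justify an IDP evaluation; the `PipelineProfile` fields are hypothetical, and a screen like this does not replace diagnosing your failure tier.

```python
from dataclasses import dataclass

@dataclass
class PipelineProfile:
    document_types: int
    templates: int
    docs_per_month: int
    variable_layouts: bool   # formats vary, or documents are semi/unstructured
    needs_tables: bool
    needs_field_level: bool
    needs_audit_trail: bool

def outgrown_signals(p: PipelineProfile) -> list:
    """Return the 'outgrown OCR' criteria this pipeline meets."""
    signals = []
    if p.variable_layouts or p.templates >= 50:
        signals.append("variable formats / template burden")
    if p.needs_tables:
        signals.append("table extraction required")
    if p.needs_field_level:
        signals.append("field-level accuracy required")
    if p.docs_per_month >= 10_000:
        signals.append("volume beyond manual QC")
    if p.needs_audit_trail:
        signals.append("compliance audit trail required")
    return signals

def recommendation(p: PipelineProfile) -> str:
    if outgrown_signals(p):
        return "evaluate IDP"
    if p.document_types < 5:
        return "stay with OCR"
    return "review manually"  # no hard signal, but more types than the 'stay' criteria allow

small = PipelineProfile(3, 3, 500, False, False, False, False)
print(recommendation(small))  # stay with OCR
```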

A few caveats are worth noting before you decide. Approximately 40% of IDP implementations underperform their initial return-on-investment projections, and the cause is typically integration failures rather than technological limitations. Switching to IDP is not a guarantee, and it adds implementation complexity, team skill requirements, and ongoing optimization needs.

The most important step before making any decision is to diagnose your actual failure tier. If you are dealing with Tier 3 failures at the character level, fix your OCR pipeline. If the failures are Tier 1 or Tier 2, meaning silent corruption or structural misreads, the problem is architectural, and IDP addresses it.

The AIIM/Deep Analysis survey of 600 large organizations found that 65% of enterprises are actively accelerating IDP initiatives, with two-thirds focused on replacing legacy systems. The biggest reported benefit was reduced processing time (50%), not headcount reduction (30%). It’s a productivity shift, not a labor replacement.

What IDP Actually Adds Beyond Better OCR

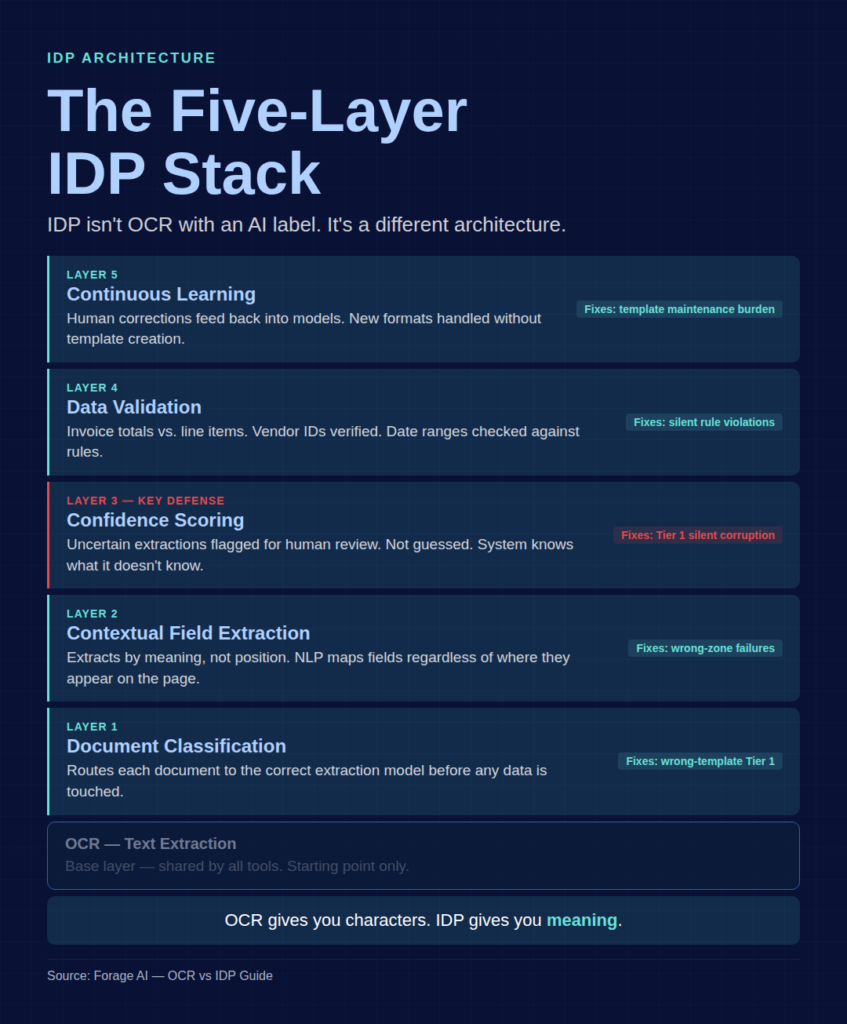

IDP is not “OCR with an AI label.” It’s a different architecture. OCR is one layer: text extraction. IDP adds five layers above it, each addressing a specific failure mode from the taxonomy above.

Layer 1: Document Classification. Before extracting anything, the system identifies the document type and routes it to the appropriate extraction model, preventing the wrong-template problem that causes many Tier 1 failures. Where OCR applies the same rules to every document, IDP classifies first.
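A minimal sketch of classify-then-route, using keyword scoring as a stand-in for the trained classifier a real IDP system would use. The document types, keyword profiles, and route names are all hypothetical; the point is the control flow: classify first, and send unrecognized documents to review instead of forcing them through a default template.

```python
# Hypothetical keyword profiles per document type (a stand-in for a trained model).
PROFILES = {
    "invoice": {"invoice", "amount due", "bill to", "subtotal"},
    "purchase_order": {"purchase order", "po number", "ship to"},
    "contract": {"agreement", "parties", "governing law", "whereas"},
}

def classify(text: str, min_hits: int = 2) -> str:
    """Pick the type whose keywords appear most often; 'unknown' if too few hits."""
    lowered = text.lower()
    scores = {doc_type: sum(kw in lowered for kw in kws) for doc_type, kws in PROFILES.items()}
    best = max(scores, key=scores.get)
    return best if scores[best] >= min_hits else "unknown"

def route(text: str) -> str:
    """Return the name of the extraction path for this document."""
    doc_type = classify(text)
    if doc_type == "unknown":
        return "human_review"  # never force an unrecognized layout into a template
    return f"extract_{doc_type}"

invoice_text = "INVOICE #1042\nBill To: Acme Corp\nSubtotal: 480.00\nAmount Due: 520.00"
print(route(invoice_text))                    # extract_invoice
print(route("Thanks for lunch yesterday!"))   # human_review
```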

Layer 2: Contextual Field Extraction. Instead of extracting text by position (zone-based), IDP extracts by meaning. It understands that “$12,500” in the bottom-right of an invoice is a total, not a line item. This is what Natural Language Processing (NLP) and machine learning models contribute: mapping extracted text to semantic fields regardless of where those fields appear on the page.
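A toy contrast between the two approaches. The zone version reads whatever amount sits on a fixed line; the label version searches for a known total label (from a hypothetical synonym list) anywhere in the text and takes the amount next to it. This is a deliberately simple rule-based stand-in for the NLP and machine learning models described above, meant only to show why position-independent extraction survives a layout change while the zone version returns a plausible but wrong value.

```python
import re

TOTAL_LABELS = ["total", "amount due", "balance due"]  # hypothetical synonyms
AMOUNT = r"\$?\s*([\d,]+\.\d{2})"

def extract_total_by_zone(text: str, line_no: int = 3):
    """Zone-style: assume the total is the amount on a fixed line."""
    lines = text.splitlines()
    if line_no >= len(lines):
        return None
    match = re.search(AMOUNT, lines[line_no])
    return match.group(1) if match else None

def extract_total_by_label(text: str):
    """Label-style: find a total label anywhere, then the amount that follows it."""
    for label in TOTAL_LABELS:
        # \b stops "total" from matching inside "Subtotal".
        pattern = r"\b" + re.escape(label) + r"\s*:?\s*" + AMOUNT
        match = re.search(pattern, text, re.IGNORECASE)
        if match:
            return match.group(1)
    return None

layout_a = "\n".join(["ACME SUPPLY", "Invoice 1042", "Subtotal: $480.00", "Total: $520.00"])
layout_b = "\n".join(["Invoice 1042 from ACME SUPPLY", "Amount Due: $520.00",
                      "Subtotal: $480.00", "Tax: $40.00"])

print(extract_total_by_zone(layout_a), extract_total_by_label(layout_a))  # 520.00 520.00
print(extract_total_by_zone(layout_b), extract_total_by_label(layout_b))  # 40.00 520.00
```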

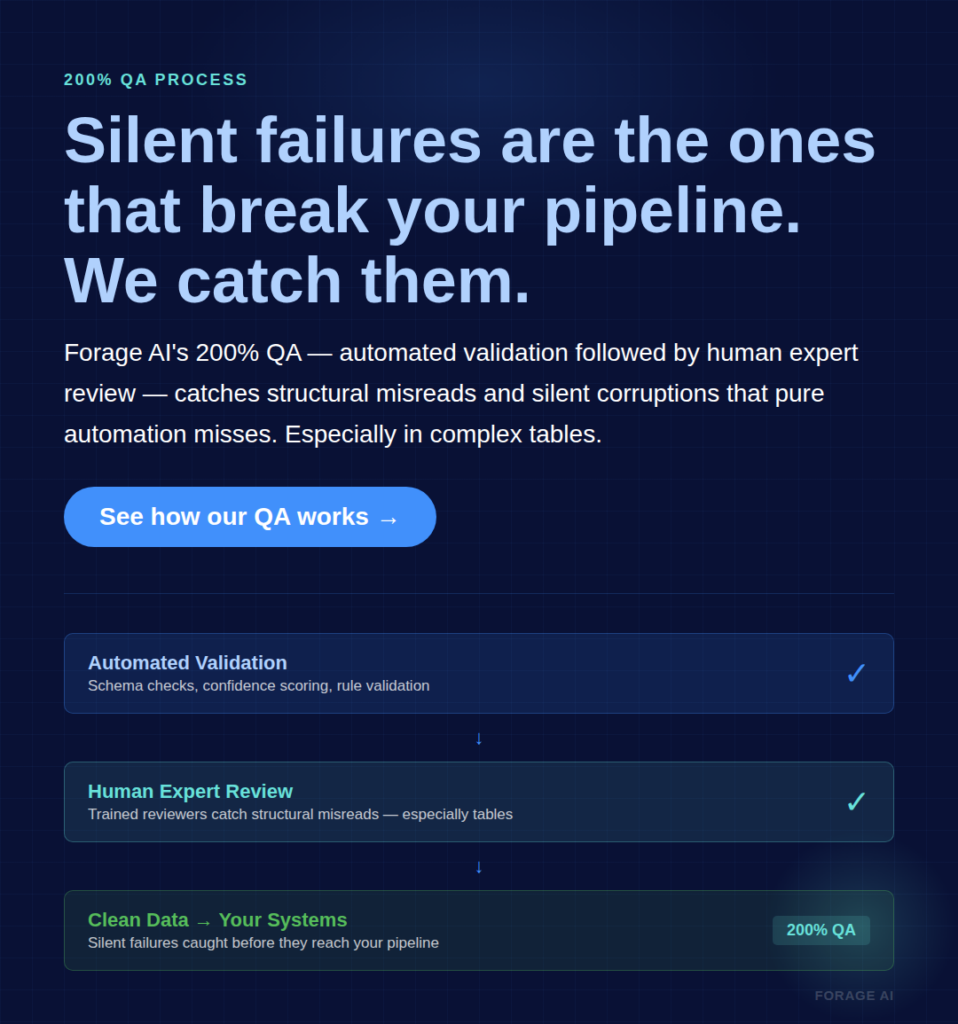

Layer 3: Confidence Scoring. When the extraction is uncertain, IDP flags it rather than guessing. Every extracted field gets a confidence score. Fields below the threshold route to human review instead of passing through as assumed-correct data. This is the primary defense against silent data corruption. The system knows what it doesn’t know.
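Confidence-based routing is simple to express once fields carry scores. A minimal sketch, assuming the extractor returns a `(value, confidence)` pair per field and that thresholds are set per field; the field names and threshold values are illustrative, not recommendations. A document goes straight through only when its review set is empty.

```python
# Illustrative per-field thresholds: stricter for fields with financial impact.
THRESHOLDS = {"total_amount": 0.95, "vendor_id": 0.90, "invoice_date": 0.85}
DEFAULT_THRESHOLD = 0.90

def route_fields(extracted: dict) -> tuple:
    """Split {field: (value, confidence)} into auto-accepted and needs-review dicts."""
    accepted, review = {}, {}
    for field, (value, confidence) in extracted.items():
        threshold = THRESHOLDS.get(field, DEFAULT_THRESHOLD)
        if confidence >= threshold:
            accepted[field] = value
        else:
            review[field] = (value, confidence)
    return accepted, review

extracted = {
    "total_amount": ("12500.00", 0.97),
    "vendor_id": ("4392", 0.71),
    "invoice_date": ("2025-03-14", 0.88),
}
accepted, review = route_fields(extracted)
print(accepted)  # {'total_amount': '12500.00', 'invoice_date': '2025-03-14'}
print(review)    # {'vendor_id': ('4392', 0.71)}
```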

Layer 4: Data Validation. Extracted data gets checked against business rules — does the invoice total match the sum of line items, is the vendor ID in the system, does the date fall within the expected range? OCR gives you raw text; IDP gives you validated, cross-referenced data.
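The three example rules translate directly into code. A minimal sketch, assuming the invoice has already been extracted into a plain dict; the field names, the set of known vendor IDs, and the date window are hypothetical. Amounts use `Decimal` so the sum comparison is exact rather than subject to floating-point rounding.

```python
from datetime import date
from decimal import Decimal

KNOWN_VENDORS = {"4392", "5120"}  # hypothetical vendor master data

def validate_invoice(inv: dict, earliest: date, latest: date) -> list:
    """Return a list of business-rule violations (empty means the invoice passed)."""
    errors = []
    line_sum = sum((Decimal(item["amount"]) for item in inv["line_items"]), Decimal("0"))
    if line_sum != Decimal(inv["total_amount"]):
        errors.append(f"total {inv['total_amount']} != sum of line items {line_sum}")
    if inv["vendor_id"] not in KNOWN_VENDORS:
        errors.append(f"unknown vendor_id {inv['vendor_id']}")
    invoice_date = date.fromisoformat(inv["invoice_date"])
    if not (earliest <= invoice_date <= latest):
        errors.append(f"invoice_date {invoice_date} outside {earliest}..{latest}")
    return errors

good = {
    "vendor_id": "4392",
    "invoice_date": "2025-03-14",
    "total_amount": "12500.00",
    "line_items": [{"amount": "10000.00"}, {"amount": "2500.00"}],
}
print(validate_invoice(good, date(2025, 1, 1), date(2025, 12, 31)))  # []
```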

Layer 5: Continuous Learning. Human corrections feed back into the models. New document formats don’t require manual template creation; the system learns from examples. This addresses the template maintenance burden that scales poorly with OCR.
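A toy illustration of the feedback loop, assuming an extractor that matches printed labels against a per-field alias table: when a reviewer corrects a field, the label they confirmed becomes a new alias, so the next document with that layout is handled without a new template. The `LabelLearner` class is hypothetical, and real IDP systems retrain statistical models rather than growing lookup tables; the sketch only shows the direction of the data flow from correction back into extraction.

```python
class LabelLearner:
    """Maps printed labels to canonical field names, learning new labels from corrections."""

    def __init__(self, aliases: dict):
        # canonical field -> set of lowercase labels known to mean that field
        self.aliases = {field: {a.lower() for a in labels} for field, labels in aliases.items()}

    def field_for(self, label: str):
        """Return the canonical field for a printed label, or None if unknown."""
        label = label.strip().lower()
        for field, labels in self.aliases.items():
            if label in labels:
                return field
        return None

    def record_correction(self, label: str, field: str) -> None:
        """A reviewer confirmed that `label` means `field`; remember it."""
        self.aliases.setdefault(field, set()).add(label.strip().lower())

learner = LabelLearner({"total_amount": ["Total", "Amount Due"]})
print(learner.field_for("Balance Payable"))  # None -> goes to human review
learner.record_correction("Balance Payable", "total_amount")
print(learner.field_for("Balance Payable"))  # total_amount
```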

[IMAGE: The Five-Layer IDP Architecture, a stacked diagram showing OCR as the base layer with classification, contextual extraction, confidence scoring, validation, and learning layers above it]

IDP isn’t magic, though. Even optimized IDP pipelines still send 15-30% of documents to human review. Confidence thresholds drift over time as document populations change. Models can produce incorrect output on low-confidence pages rather than flagging uncertainty. At extreme volume, the human-in-the-loop (HITL) pathway itself becomes a bottleneck.

The most reliable approach combines machine extraction with systematic human QA. Forage AI’s IDP service uses a Human+AI hybrid model with what we call a 200% QA process: every extraction passes through automated validation followed by human expert review. The QA team is three times the industry average size relative to the delivery team. This catches silent failures that pure automation misses, particularly in complex tables and high-stakes documents where a single field error can have material consequences.

The results across the broader market reflect the architectural advantage. IDP reduces error rates by over 52% compared to OCR-only pipelines. Metro AG reduced invoice turnaround from 1-2 days to one hour. Top-performing IDP achieves 95%+ straight-through processing (STP) rates, meaning documents flow from intake to output with no human intervention.

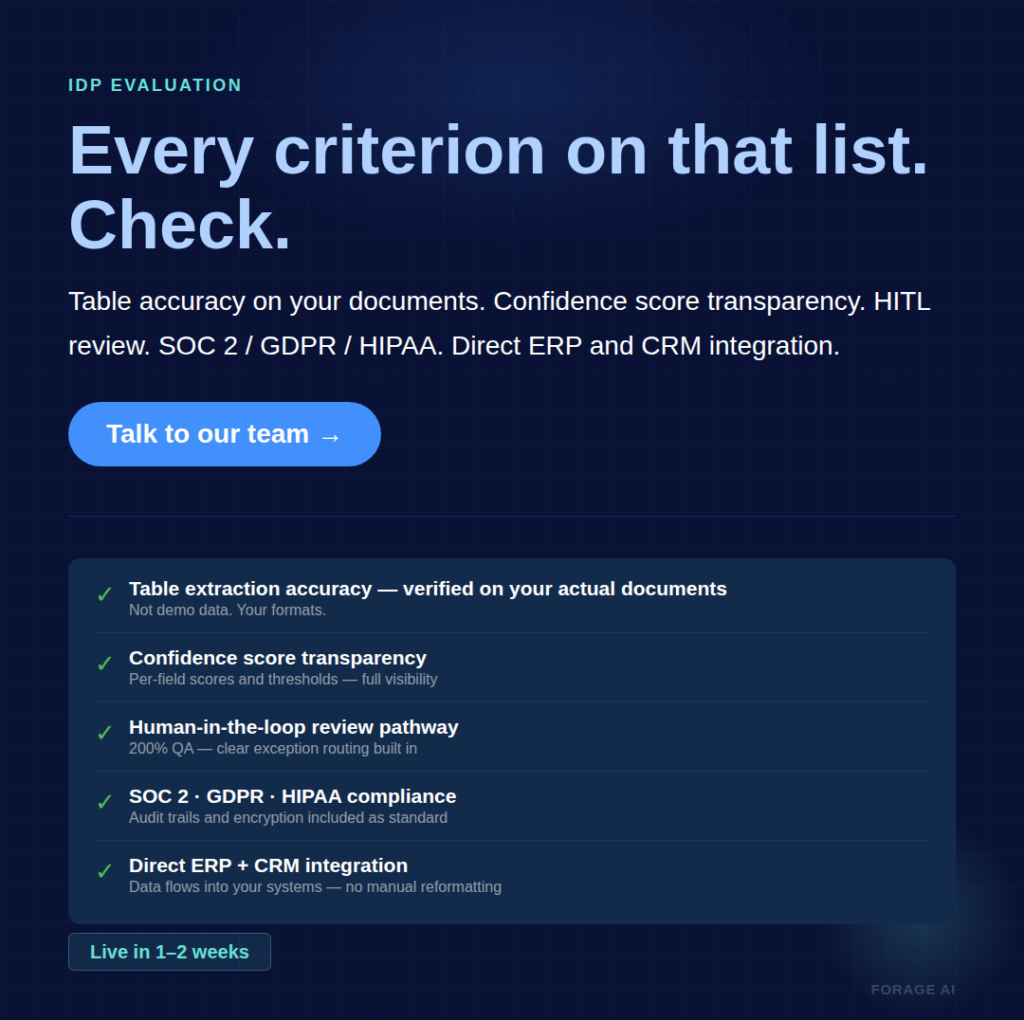

Evaluating IDP for Your Document Pipeline

If you’ve determined that your failures are architectural (Tier 1 or 2) and your document mix demands more than OCR can deliver, here’s how to evaluate IDP solutions. Weigh these criteria by your specific failure profile.

Table extraction accuracy. This is the metric. Not character accuracy, not “overall accuracy,” not accuracy on a demo dataset. Ask for table extraction results on your actual documents, including merged cells, multi-level headers, and borderless formats. If a vendor can’t break this out, their “99% accuracy” number is meaningless for your use case.
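When vendors run your documents, it helps to score the results yourself. A minimal sketch of one common approach, cell-level precision, recall, and F1, assuming both ground truth and extraction are reduced to `(row, column, normalized text)` triples. This is a simplification of published table-structure metrics, not an implementation of any specific one, but it already shows why character accuracy is the wrong number: in the example every character is correct, yet structure scores drop.

```python
def cell_set(grid):
    """Convert a list-of-rows grid into a set of (row, col, normalized text) triples."""
    return {(r, c, " ".join(text.split()).lower())
            for r, row in enumerate(grid) for c, text in enumerate(row) if text.strip()}

def table_scores(truth, predicted):
    """Cell-level precision, recall, and F1: a cell counts only if position AND text match."""
    t, p = cell_set(truth), cell_set(predicted)
    hits = len(t & p)
    precision = hits / len(p) if p else 0.0
    recall = hits / len(t) if t else 0.0
    f1 = 2 * precision * recall / (precision + recall) if hits else 0.0
    return precision, recall, f1

truth = [["Item", "Qty", "Amount"], ["Bolts", "40", "10.00"], ["Nuts", "100", "5.00"]]
# A flattened second row: every character is right, but the structure is not.
predicted = [["Item", "Qty", "Amount"], ["Bolts 40 10.00"], ["Nuts", "100", "5.00"]]
print(table_scores(truth, predicted))  # precision ~0.86, recall ~0.67, F1 ~0.75
```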

Variable format handling. How many templates does the system need? How does it handle a completely new document layout that it’s never seen? Template-based IDP is just OCR with more templates. True IDP handles new formats through classification and contextual extraction, not manual template creation.

Confidence score transparency. Can you see why the system flagged a specific extraction? Can you set per-field confidence thresholds? Opaque confidence scoring means you can’t tune the system for your specific accuracy requirements.

HITL workflow design. How does the exception handling path work? What percentage of documents are routed to human review? How long does the review queue take to clear? A system with 98% accuracy but no clear exception path is worse than one with 92% accuracy and well-designed human review routing.
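The reasoning behind that comparison is arithmetic about what reaches downstream systems unreviewed. A minimal sketch, assuming (hypothetically) that the routed system flags 90% of its errors for review and that review fixes everything it sees; with no exception path, every error passes through.

```python
def residual_error_rate(accuracy: float, share_of_errors_flagged: float) -> float:
    """Fraction of documents that reach downstream systems with an undetected error."""
    return (1.0 - accuracy) * (1.0 - share_of_errors_flagged)

no_exception_path = residual_error_rate(0.98, 0.0)  # every error passes through
with_review = residual_error_rate(0.92, 0.90)       # ASSUMED: 90% of errors flagged
print(f"{no_exception_path:.1%} vs {with_review:.1%}")  # 2.0% vs 0.8%
```

The 90% flag rate is invented for illustration. The comparison only holds while the routed system flags more than 75% of its errors; below that, 8% × (1 − flag rate) exceeds 2% and the higher-accuracy system without review lets fewer errors through.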

Compliance certifications. For regulated industries, you need provable accuracy with audit trails. SOC 2, GDPR, and HIPAA compliance aren’t optional features. They’re baseline requirements. Forage AI’s IDP service supports all three, along with built-in validation, audit trails, and encryption. Document handling at scale also means supporting the full range of enterprise formats: PDF, DOC, EML, MSG, JPG, TIFF, including handwritten notes and documents exceeding 2,000 pages.

Integration depth. How does the extracted data reach your systems? Direct ERP and CRM integration, webhooks, API access? The extraction is only valuable if it flows into your workflow without manual reformatting.

Implementation timeline. Traditional enterprise IDP deployments take 6-12 months. Modern AI-native platforms can be deployed in 1-2 weeks. Building from APIs takes 3-6 months. Your timeline tolerance affects which solution category is a good fit.

Red flags in IDP evaluation:

- Accuracy claims without a document type breakdown

- No confidence score visibility

- No human review pathway

- Only demonstrated on clean, structured test documents

- No audit trail or compliance documentation

- Pricing that doesn’t scale with your volume trajectory

As Caroline Krebs, VP of Finance at Rossum, puts it: “One material mistake can erase years of credibility. Finance cannot rely on black-box models.” Demand transparency into how the system handles uncertainty, not just the easy cases.

Frequently Asked Questions

What is the failure rate of OCR on business documents?

On clean, typed text, OCR achieves 99%+ character accuracy. On complex business documents with variable layouts, that drops to 60-80%. Handwriting recognition hits around 80% accuracy. But character accuracy isn’t the metric that matters. At 95% on a 2,500-character invoice, 125 characters need manual correction.

Can OCR extract data from tables accurately?

Simple fixed-grid tables with single header rows: usually yes. Anything more complex (merged cells, multi-level headers, borderless tables) produces unreliable results. Multi-page table success rates are approximately 70%, and 40-60% of enterprise data lives in tables.

Is IDP just OCR with AI added on top?

No. OCR is one layer (text extraction). IDP adds classification, contextual extraction, confidence scoring, data validation, and continuous learning. The difference is architectural, not incremental. OCR tells you what characters are on the page. IDP tells you what the document means.

When should I switch from OCR to IDP?

Use the decision criteria in the “When to Stay” section above. The clearest signals: variable document formats across vendors, table extraction requirements, field-level accuracy needs, volume exceeding manual QC capacity, or compliance requirements mandating audit trails.

How much does OCR error correction cost?

At $2.56 per invoice correction and 6 minutes 15 seconds per fix, processing 10,000 invoices annually costs $25,600 in correction alone. This excludes downstream remediation, compliance risk, and the team time spent investigating data discrepancies.

How long does IDP implementation take?

Traditional enterprise IDP platforms: 6-12 months. Modern AI-native IDP: 1-2 weeks for standard use cases. Building from APIs: 3-6 months. The range depends on document complexity, integration requirements, and whether you need compliance certifications.

The Real Question Isn’t “OCR or IDP”

The question isn’t which technology is better in the abstract. It’s whether your document pipeline needs text extraction or document understanding. Those are different architectural requirements, and treating one as the other is where most teams get stuck.

If your OCR failures are Tier 3 (character-level issues on otherwise structured documents), tune your OCR. It’s the right tool for that problem. If your failures are Tier 1 or 2 (silent corruption, structural misreads, or table relationships breaking), no amount of OCR configuration can address an architectural gap.

The teams still running OCR on complex, variable documents aren’t making a technology choice. They’re making a cost choice. And the cost of manual correction, silent data corruption, and compounding template maintenance isn’t static. It grows with every new vendor format, every new document type, and every month the pipeline runs.