Counsel pings on Slack at 4:47 PM Thursday: a regulator sent questions about your crawl and wants a one-pager by Friday morning. Robots.txt handling. Terms of service posture. Lawful basis for personal data. Audit trail. Engineers know the words. The pipeline does not say them in code.

This article is for the data engineer or data product manager who has to ship that pipeline. Not the legal essay version. Not the “respect everything, talk to your lawyer” version. The version where every section maps a specific 2024-2025 ruling or regulation to the architectural primitive it forces, with the code, schema, or decision rule. You can land this in a pull request this week.

Four primitives carry the weight: a real robots.txt parser tied to a CI gate, a hash-and-diff loop on each target’s terms of service, a per-jurisdiction policy switch driven from a routing table, and an append-only provenance ledger that your counsel can read without translation. Build those, and a legal review becomes a code review.

Quick Digest:

- Web scraping legal compliance is an engineering problem with a legal acceptance test.

- Four primitives carry the weight: a real RFC 9309 robots.txt parser tied to a CI gate, a hash-and-diff loop on each target’s terms of service, a per-jurisdiction policy switch driven by a routing table, and an append-only provenance ledger that counsel can read without translation.

- This article maps every recent ruling (hiQ v. LinkedIn, Van Buren v. United States, Meta v. Bright Data) to the architectural primitive it forces, and ships the code, schemas, and decision rules to land in your pipeline this week.

The 2024-2025 Legal Landscape, Translated for Engineers

Note: This article is implementation guidance, not legal advice. Engage qualified counsel for jurisdiction-specific questions and any matter touching personal data.

The headline rulings of the last four years did not give scrapers a green light. They gave engineers a coordinate system. Two cases narrowed the federal hacking statute, one case narrowed contract law against logged-off bots, and a fourth signals the next wave of suits toward copyright and anti-circumvention rather than the Computer Fraud and Abuse Act.

hiQ Labs v. LinkedIn and Van Buren: the gates-up rule

In hiQ Labs v. LinkedIn (9th Cir., 31 F.4th 1180, April 2022), the Ninth Circuit reaffirmed that scraping public LinkedIn profiles likely does not violate the CFAA’s “without authorization” prong, because public pages are gate-opened by default. A year earlier, Van Buren v. United States (593 U.S. 374, 2021) applied the same gates-up versus gates-down logic at the Supreme Court level, narrowing “exceeds authorized access” to mean accessing files the user has no permission to reach at all. For an engineer, this collapses to a simple invariant: if your dispatcher only fetches URLs that any unauthenticated user can fetch, the CFAA is not the statute that ends your weekend.

Meta v. Bright Data and X Corp v. Bright Data: terms of service have a session

In January 2024, Judge Edward Chen granted Bright Data summary judgment on its breach-of-contract claim, holding that Facebook and Instagram’s terms of service govern only logged-in use. Bright Data was not a “user” of those services when it scraped public pages while logged off. A parallel ruling in X Corp v. Bright Data later that year reached a similar conclusion and added a copyright-preemption theory: claims based purely on copying public data were preempted by the Copyright Act. The architectural takeaway is sharp. ToS binds a session, not an IP address. Sessions are something your fetch layer either holds or does not, and that distinction has to be a predicate, not a comment in the runbook.

RFC 9309: voluntary protocol, evidentiary weight

The Robots Exclusion Protocol became a real IETF standard in September 2022 as RFC 9309. It is voluntary. It is also the closest thing to a documented expression of the site operator’s intent, and regulators read it that way. France’s CNIL, in its 2024 focus sheet on legitimate interest, explicitly cited robots.txt compliance as a factor in any Article 6(1)(f) balancing test. Ignoring a Disallow does not automatically make a scrape unlawful. It makes a defensible posture harder to maintain, and a thin posture untenable.

GDPR and CCPA in one paragraph each

GDPR Article 6(1)(f) lets you process personal data on a “legitimate interest” basis if you can document necessity, proportionality, and safeguards. Article 14 obliges you to notify data subjects when you obtain their data indirectly (which is what scraping is) within one month, with a narrowly construed disproportionate-effort exemption. Maximum administrative fines reach €20M or 4% of global annual turnover. CCPA and CPRA carve out “publicly available” information lawfully made available from government records or that is not restricted by the consumer. The carve-out is real but narrower than most engineers assume: a profile set to “everyone” is publicly available; one restricted to “friends” is not, even if a logged-in scraper can see it.

Quick Summary. Public pages are gates-up under hiQ and Van Buren. ToS bind a session, not an IP. Robots.txt is voluntary but evidentiary. GDPR turns on Article 6(1)(f) plus Article 14 notice; CCPA turns on the narrow “publicly available” carve-out.

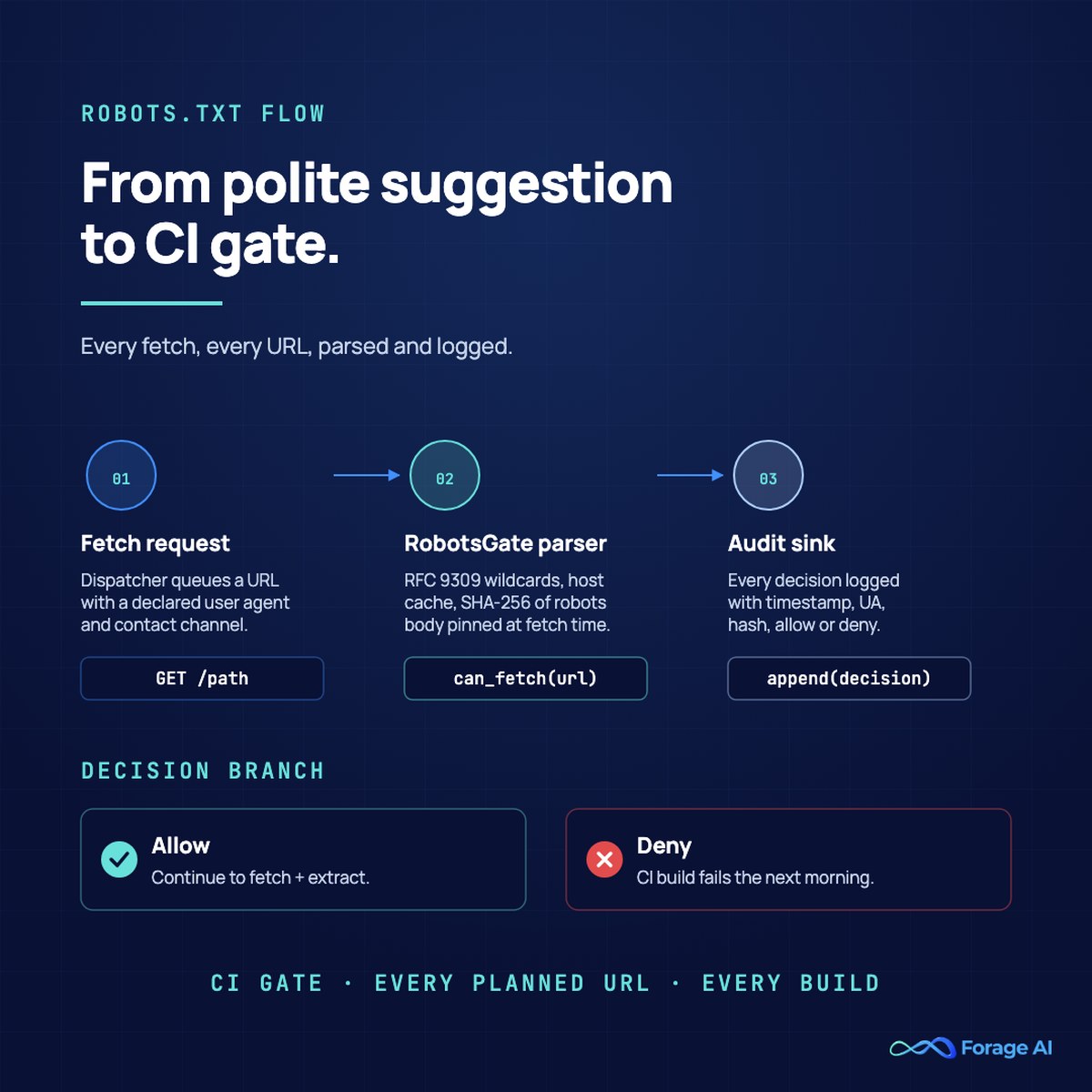

Robots.txt Handling: From Polite Suggestion to CI Gate

The first failure mode is treating robots.txt as a string match in a long-forgotten module. RFC 9309 introduced wildcard semantics, including * an end-of-line $, which string matching gets wrong silently. A 2025 ACM Internet Measurement Conference study confirmed what site operators already knew: scrapers selectively respect robots.txt, and AI search crawlers often do not check it at all. Both behaviors show up in litigation discovery.

The pattern is to use a real parser, log every decision, and gate the build.

# robots_gate.py - wraps urllib.robotparser.

# For stricter RFC 9309 wildcards, swap in robotspy.

from urllib.robotparser import RobotFileParser

from urllib.parse import urlparse

import hashlib, time

class RobotsGate:

def __init__(self, user_agent: str, audit_sink):

self.user_agent = user_agent

self.audit_sink = audit_sink

self._cache: dict[str, tuple[RobotFileParser, str]] = {}

def _load(self, host: str) -> tuple[RobotFileParser, str]:

if host in self._cache:

return self._cache[host]

rp = RobotFileParser()

rp.set_url(f"https://{host}/robots.txt")

rp.read()

# In practice, fetch the bytes yourself and pass them to rp.parse(...)

# so you can hash exactly what the parser saw.

body = b""

digest = hashlib.sha256(body).hexdigest()

self._cache[host] = (rp, digest)

return rp, digest

def can_fetch(self, url: str) -> bool:

host = urlparse(url).netloc

rp, digest = self._load(host)

allowed = rp.can_fetch(self.user_agent, url)

self.audit_sink.write({

"ts": time.time(),

"url": url,

"ua": self.user_agent,

"robots_sha256": digest,

"decision": "allow" if allowed else "deny",

})

return allowed

Two practitioner notes. First, the standard library parser still trails RFC 9309 on wildcard edge cases; if your targets use Allow: /api/*$ patterns, prefer robotspy, which tracks the RFC. Second, the audit sink call is not optional. Without a per-decision log, you cannot reconstruct what the file said the day you fetched it, which is exactly what counsel will ask.

The CI gate closes the loop. Before any new crawl plan ships, run a smoke test that loads each target’s robots.txt, walks the planned URL set, and fails the build if any planned URL is disallowed for your declared user agent. Site operators change their robots.txt; your test catches it the morning after, not the morning of the regulator’s email.

Expert Insight. Treat robots.txt as a machine-readable license, not an etiquette tip. The hash you store the day you fetch a page is the only artefact that lets you prove, months later, that the site operator’s stated intent permitted the crawl. Without it, you are arguing from memory.

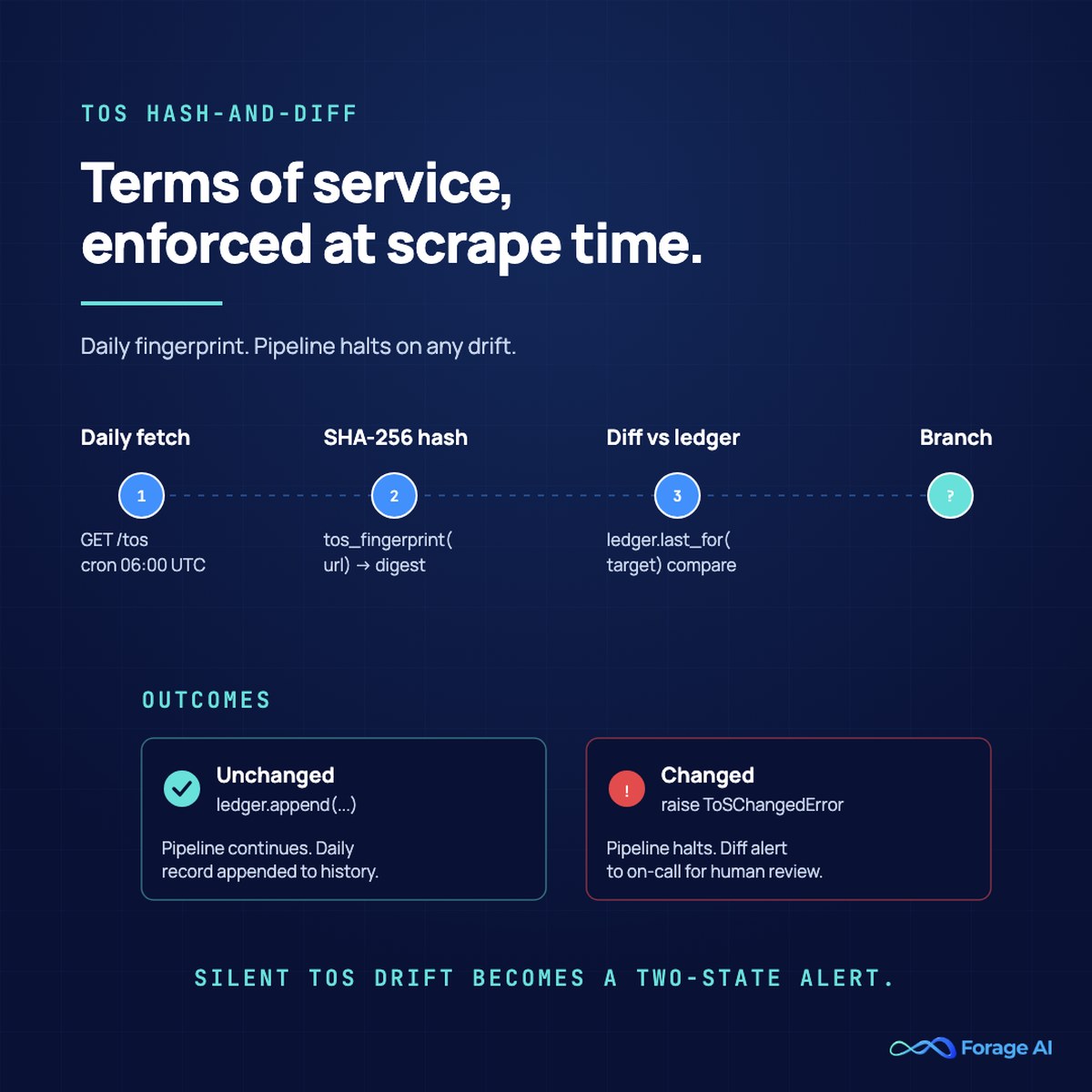

Terms of Service Enforcement at Scrape Time

Bridging from robots.txt to ToS: where robots.txt is a public, machine-readable signal, terms of service are a private contract whose binding force depends on a session that may or may not exist. Most teams read them once at project kickoff and never again. Meta v. Bright Data turned that habit into a real risk surface. The ruling does not say that ToS never matters. It says ToS binds a session, and your fetch layer is the only place that knows whether a session exists.

A defensible architecture builds three things into the fetch path.

First, a session-state predicate at the dispatcher: a fetch is logged off if and only if no cookie, header, or token implies an authenticated session. This sounds obvious. It breaks the moment a developer adds a Cookie: header for caching purposes or a partner integration leaves an OAuth token in the default client. Make the predicate a single function that the dispatcher calls, and have it return a typed value that a logger and a policy switch both consume.

Second, a hash-and-diff loop on the ToS document itself.

import hashlib, requests, datetime

def tos_fingerprint(tos_url: str) -> dict:

r = requests.get(tos_url, timeout=10)

r.raise_for_status()

body = r.text.encode("utf-8")

return {

"tos_url": tos_url,

"fetched_at": datetime.datetime.utcnow().isoformat() + "Z",

"sha256": hashlib.sha256(body).hexdigest(),

"length": len(body),

}

def assert_tos_unchanged(target: str, ledger):

current = tos_fingerprint(target)

last = ledger.last_for(target)

if last and last["sha256"] != current["sha256"]:

ledger.flag(target, current, last, severity="HIGH")

raise ToSChangedError(

f"ToS for {target} changed; pipeline halted for review."

)

ledger.append(target, current)

Run this on a daily schedule. When the hash changes, the pipeline halts that target until a human reviews the diff. That single move converts the most common failure mode (silent ToS drift) into an alert with two known states the on-call engineer can act on.

Third, a decision rule any reviewer can read. Document, for each target domain, the matrix of session state by data type by lawful basis. The matrix is small enough to fit in a wiki page and important enough that it lives in version control next to the crawler config. Your compliance architecture should make this matrix the single place a “can we scrape X” question gets answered, with the deeper reasoning one click away.

Quick Summary. Add a session-state predicate at the dispatcher, run a daily hash-and-diff on every target’s ToS, and write the session-by-data-type matrix into version control. Three artefacts, all small.

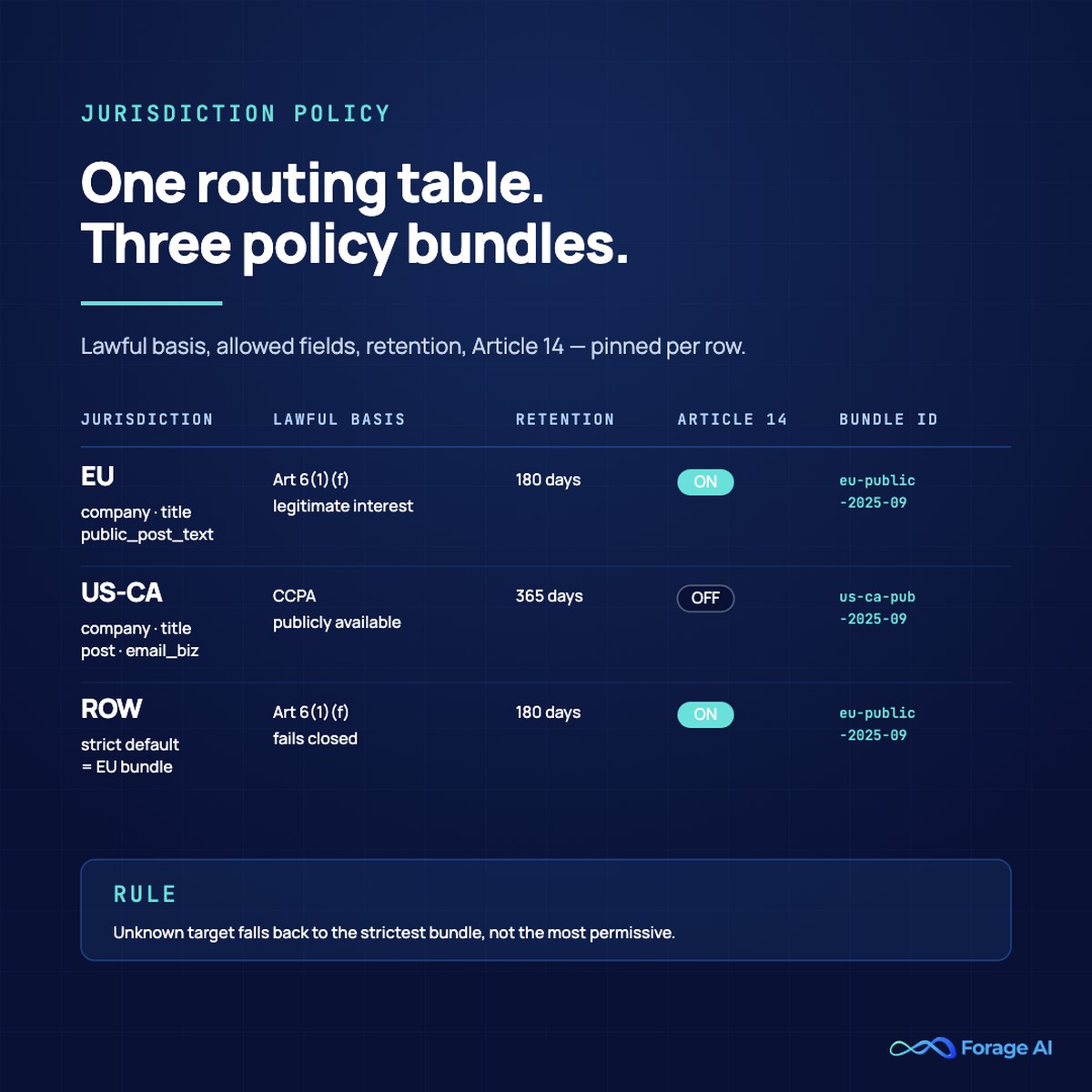

Per-Jurisdiction Policy Switches That Survive a Real Legal Review

Bridging from ToS to jurisdiction: once the dispatcher knows whether a session exists, it has to know which legal regime the target sits inside. Counsel does not care that GDPR is “stricter than CCPA.” Counsel cares that for any given URL your pipeline touches, you can name the lawful basis, the allowed fields, the retention class, and whether an Article 14 notice fires. That is a routing problem, and it has the shape of a routing table.

The routing table maps a target signal (registrant country, TLD, content-language headers, the data type) to a PolicyBundle:

from dataclasses import dataclass

from typing import Literal

LawfulBasis = Literal[

"legitimate_interest_gdpr",

"publicly_available_ccpa",

"consent",

"legal_obligation",

"no_personal_data",

]

@dataclass(frozen=True)

class PolicyBundle:

jurisdiction: str # e.g. "EU", "US-CA", "UK", "ROW"

lawful_basis: LawfulBasis

allowed_fields: frozenset[str]

retention_days: int

article_14_notice: bool # GDPR personal data indirectly obtained

bundle_id: str # version pin, e.g. "eu-public-2025-09"

POLICY_TABLE: dict[str, PolicyBundle] = {

"EU": PolicyBundle(

jurisdiction="EU",

lawful_basis="legitimate_interest_gdpr",

allowed_fields=frozenset({"company", "title", "public_post_text"}),

retention_days=180,

article_14_notice=True,

bundle_id="eu-public-2025-09",

),

"US-CA": PolicyBundle(

jurisdiction="US-CA",

lawful_basis="publicly_available_ccpa",

allowed_fields=frozenset({

"company", "title", "public_post_text", "email_business",

}),

retention_days=365,

article_14_notice=False,

bundle_id="us-ca-public-2025-09",

),

}

def policy_for(target_url, registrant_country, content_lang) -> PolicyBundle:

if registrant_country in {"DE", "FR", "IE", "NL", "ES", "IT", "PL"}:

return POLICY_TABLE["EU"]

if registrant_country == "US" and "ca" in target_url:

return POLICY_TABLE["US-CA"]

# ROW fallback should be the most conservative bundle, not the most permissive.

return POLICY_TABLE["EU"]

Two non-obvious rules. The default fallback for an unknown target should be the strictest bundle in your table, not a permissive one. Defaults travel; if the table is missing a row tomorrow, the strict default fails closed instead of open. And the bundle ID belongs in your provenance ledger on every record, so a future audit can pin a row to the exact policy that produced it.

The Article 14 notice is the hardest mechanical piece, and the one teams skip. CNIL has been explicit that the “disproportionate effort” exemption is narrow; the Polish DPA’s €220,000 fine on a scraper who already had contact details settled the question for that fact pattern. The pragmatic pattern is a notice generator that runs nightly, batches new EU-bundle records, sends notices through a verified channel where one exists, and posts a public notice on a transparency page when no verified channel does. That public-notice fallback is not a guarantee, but it is the documented good-faith effort regulators and counsel both expect to see.

Looking for a managed pipeline with all four primitives baked in? Forage AI runs compliance-first extraction with provenance, jurisdiction routing, and audit trails included by default. Talk to our team: Forage AI compliance approach.

Expert Insight. A jurisdiction routing table is the single artefact most teams underbuild. The right size is small (four to six bundles), version-pinned, and enforced at the dispatcher before a single byte is fetched. If counsel cannot read the table on one screen, it is the wrong table.

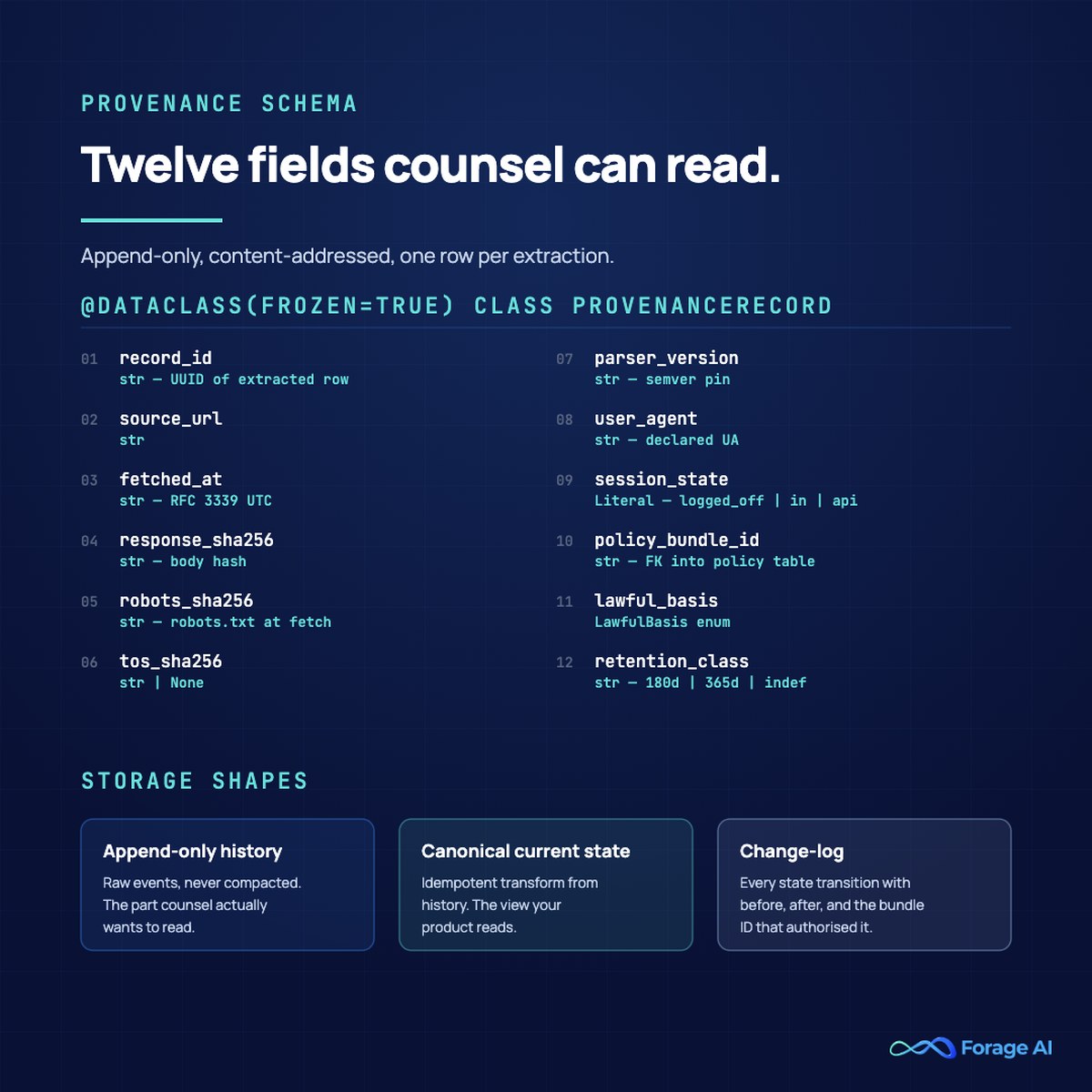

The Audit Trail: Provenance Schema That Counsel Can Read

Bridging from policy to provenance: the policy bundle answers “should we have fetched this?” The audit trail answers “can you prove what you fetched, what the rules said, and which bundle decided?” A defensible audit trail is not “we have logs.” It is an append-only, content-addressed ledger where every extracted record points at the bytes, decisions, and policies that produced it. The schema below is the smallest viable shape; teams that ship this rarely regret it.

@dataclass(frozen=True)

class ProvenanceRecord:

record_id: str # UUID of the extracted row

source_url: str

fetched_at: str # RFC 3339 UTC

response_sha256: str # hash of the HTTP response body

robots_sha256: str # hash of robots.txt at fetch time

tos_sha256: str | None # hash of the ToS document at fetch time

parser_version: str # semver of the parser

user_agent: str

session_state: Literal["logged_off", "logged_in", "api_key"]

policy_bundle_id: str # FK into your policy table

lawful_basis: LawfulBasis

retention_class: str # e.g. "180d", "365d", "indefinite_business"

extracted_fields: list[str] # fields the policy permitted

Three reasons this schema is worth the storage cost. First, the response hash plus the parser version lets you reproduce any extraction bit-for-bit, which is the only way to defend against a “you fabricated this” claim. Second, the robots and ToS hashes pin the site operator’s stated intent at fetch time, which is exactly what regulators reach for when assessing a legitimate-interest balancing test. Third, the policy bundle ID and lawful basis travel with the record forever, which means a deletion request months later can be answered by a single SQL query rather than a forensic project.

Storage shape: an append-only raw history table, a canonical current-state table populated by an idempotent transform, and a change-log that records every state transition. The append-only history is the part counsel actually wants. Do not let an over-eager DBA “compact” it.

Quick Summary. Twelve fields, append-only, content-addressed. The response hash plus parser version reproduces the extraction; the robots and ToS hashes pin intent; the bundle ID pins policy. Everything else is commentary.

Case-Law to Code Mapping

Bridging from schema to direct mapping: if every primitive above feels like a separate engineering choice, this table is the connective tissue. Rulings are not abstractions; they pin down where in your codebase a primitive belongs.

| Ruling or rule | Architectural primitive it forces | Where it lives in code |

|---|---|---|

| hiQ v. LinkedIn, Van Buren v. US | Public-only crawl flag at the dispatcher | Dispatcher.fetch_plan() checks is_public(url) before queueing |

| Meta v. Bright Data, X Corp v. Bright Data | Session-state predicate at the fetch layer | session_state(request) returns a typed value |

| RFC 9309 + CNIL focus sheet | Real robots.txt parser plus per-decision log | RobotsGate.can_fetch() with audit sink |

| GDPR Art 6(1)(f) and Art 14 | Lawful-basis tag and notice generator | PolicyBundle.lawful_basis, nightly notice job |

| CCPA “publicly available” | Explicit publicly_available_ccpa basis pinned per record | PolicyBundle.lawful_basis for US-CA bundle |

| Reddit v. Perplexity (DMCA §1201) | No-circumvention rule: never break paywalls or anti-bot tokens | Dispatcher refuses URLs that required CAPTCHA solve or token theft |

The map is the deliverable for your one-pager. Counsel reads the left column. Engineering owns the right two.

Failure Modes That Get Pipelines Subpoenaed

Four failure modes recur in litigation discovery. Each has a code-level fix, and each is the kind of thing that looks fine in normal operation and catastrophic in cross-examination.

Silent retry storms after a 429. A rate-limited fetch path that retries without exponential backoff or a circuit breaker reads, in logs, exactly like a denial-of-service attempt. Implement decorrelated jitter, a per-host concurrency cap, and a hard ceiling that pages on-call before it reaches the magnitude that would trigger an abuse report.

Mixed crawler identities across IPs. Rotating user agents while rotating IPs without declaring a contact channel reads as evasive. Pick one declared user-agent string per project, include a contact URL in it, and rotate IPs only with a documented rate-limiting purpose, not an identity-laundering one.

Stale ToS hash, no diff. If you cannot prove what the ToS said the day you scraped, you cannot prove your interpretation was reasonable. The hash-and-diff loop above is the smallest move that closes this gap.

No Article 14 mailbox. A pipeline that processes EU-bundle records without a deletion-request mailbox and a documented response SLA is, mechanically, in violation. Stand up the mailbox before the first production EU crawl, not after the first request.

Expert Insight. The four failure modes share a trait: each looks fine in normal operation and catastrophic in cross-examination. The right time to fix them is the quarter before they matter.

Forage AI’s Compliance-First Approach

Forage AI’s managed extraction pipelines build these primitives in by default: real RFC 9309 parsing on every fetch, hash-and-diff on terms of service per target, jurisdiction-routed policy bundles with versioned IDs, and append-only provenance ledgers your counsel can query directly. Together these primitives are what turns ad-hoc scraping into automated data collection a regulator can audit on demand. This is what compliance-first data extraction automation looks like in practice: the legal review surface is engineered in, not bolted on after the first regulator email. The same primitives carry into adjacent workflows where counsel review is the gate, including contract data extraction for legal teams that need provenance and accuracy held to the same standard, and high-volume ecommerce data scraping programs where catalog, pricing, and review extraction, including downstream price intelligence feeds and competitor price tracking, must clear the same robots.txt, ToS, and jurisdiction gates before a single product page is fetched. Compliance is the gate that lets teams adopt modern data extraction services without rebuilding the legal-review surface in-house. The Forage QA layer is three times the industry-average size, and every extraction carries the bundle ID, lawful basis, and retention class that produced it. Teams ship faster because the legal-review surface is built once, by the partner, instead of rebuilt every quarter by an engineering team that would rather be working on data products.

Compliance-first web scraping at enterprise scale, with audit trails, jurisdiction routing, and Article 14 workflows handled for you. See how Forage AI does it: Forage AI compliance approach.

Frequently Asked Questions

Is web scraping legal in the US in 2026? Scraping publicly accessible web pages is generally not a CFAA violation in the Ninth Circuit and most other circuits after Van Buren. State common-law claims, copyright, and DMCA §1201 anti-circumvention remain live theories, especially where a scraper bypasses anti-bot measures.

Does respecting robots.txt give legal cover? Not by itself. Robots.txt is a voluntary protocol under RFC 9309. Compliance is a strong positive signal in regulatory and judicial reasoning; ignoring it is a strong negative signal. It is necessary but not sufficient.

Do platform terms of service bind a logged-off scraper after Meta v. Bright Data? Generally not, when the scrape is genuinely logged off and the data is public. The architectural commitment that gives this defense its weight is a session-state predicate at the fetch layer that proves no session existed.

What lawful basis covers scraping personal data under GDPR? In practice, only Article 6(1)(f) legitimate interest is workable for scraping at scale, and only with a documented Legitimate Interest Assessment covering necessity, proportionality, and safeguards. Consent is impractical at scrape time.

When does Article 14 notice apply, and how do I scale it? It applies whenever you obtain personal data indirectly, which is exactly what scraping does, and the deadline is one month. Scale it with a nightly notice job, a verified-channel send (if one exists), and a public-transparency page (if one does not).

Does CCPA’s “publicly available” exemption cover scraped social profiles? It covers profiles that the consumer has not restricted to a specific audience. A profile set to “friends only” is not publicly available, even if a logged-in account can see it.

Can I scrape data behind a CAPTCHA or anti-bot system? Treat any technical protection measure as a hard stop. Bypassing it exposes you to DMCA §1201 anti-circumvention liability, which carries statutory damages far higher than those for a contract-breach claim.

What does a defensible audit trail actually look like? An append-only ledger in which every extracted record includes the source URL, fetch timestamp, response hash, robots.txt hash, ToS hash, parser version, policy bundle ID, lawful basis, and retention class. If any of those fields is missing, the trail is not yet defensible.

Conclusion

Web scraping legal compliance is an engineering problem with a legal acceptance test. A real RFC 9309 parser with an audit sink answers the robots.txt question. A hash-and-diff loop on terms of service plus a session-state predicate answers the contract question. A jurisdiction policy switch with a strict default and an Article 14 notice generator answers the GDPR question. An append-only provenance ledger answers every other question counsel will ever ask. Build those four primitives once, and a legal review becomes a code review you can pass on the first pass, every quarter, without rewriting the pipeline.

Sources

- 9th Circuit, hiQ Labs v. LinkedIn opinion: https://law.justia.com/cases/federal/appellate-courts/ca9/17-16783/17-16783-2022-04-18.html

- Library of Congress CRS report on Van Buren v. United States: https://www.congress.gov/crs-product/LSB10616

- Quinn Emanuel client alert on Meta v. Bright Data: https://www.quinnemanuel.com/the-firm/news-events/client-alert-meta-v-bright-data-significant-decision-for-web-scraping-industry/

- CNIL focus sheet on legitimate interest and web scraping: https://www.cnil.fr/en/legal-basis-legitimate-interest-focus-sheet-measures-implement-case-data-collection-web-scraping

- IETF RFC 9309 tracking issue (Python cpython): https://github.com/python/cpython/issues/138907

- ACM IMC 2025: scrapers selectively respect robots.txt: https://dl.acm.org/doi/10.1145/3730567.3764471

- Farella Braun + Martel on revised CCPA regulations: https://www.fbm.com/publications/data-scraping-under-the-revised-ccpa-regulations/

- Lowenstein Sandler on Meta v. Bright Data implications: https://www.lowenstein.com/news-insights/publications/client-alerts/meta-v-bright-data-ruling-has-important-implications-for-webscraping-activities-by-investment-advisers-im

Related Articles

- A Guide To Modern Data Extraction Services in 2026. The pillar overview of the modern data extraction services landscape, from build-versus-buy to compliance-first delivery.

- Legal and Ethical Issues in Web Scraping: What You Need to Know. The companion awareness guide covers the legal landscape end-to-end.

- Debunking Common Myths About AI-Powered Web Data Extraction. Practical compliance guidance for AI-driven extraction programs.

- Solving the AI Training Data Crisis With Compliant Web Scraping. Compliance frameworks for AI training data programs.

- How to Legally Extract Social Media Data at Scale. Platform-specific compliance for social data programs.