Enterprise Evaluation Checklist: How to Choose the Right Data Extraction Company

Your shortlist is down to two or three vendors. They all have enterprise logos in the deck, all claim 99%+ accuracy, and all say “millions of sources.” The feature comparison spreadsheet has converged to a tie. The buying committee is split between the one the CFO likes and the one the engineering lead trusts.

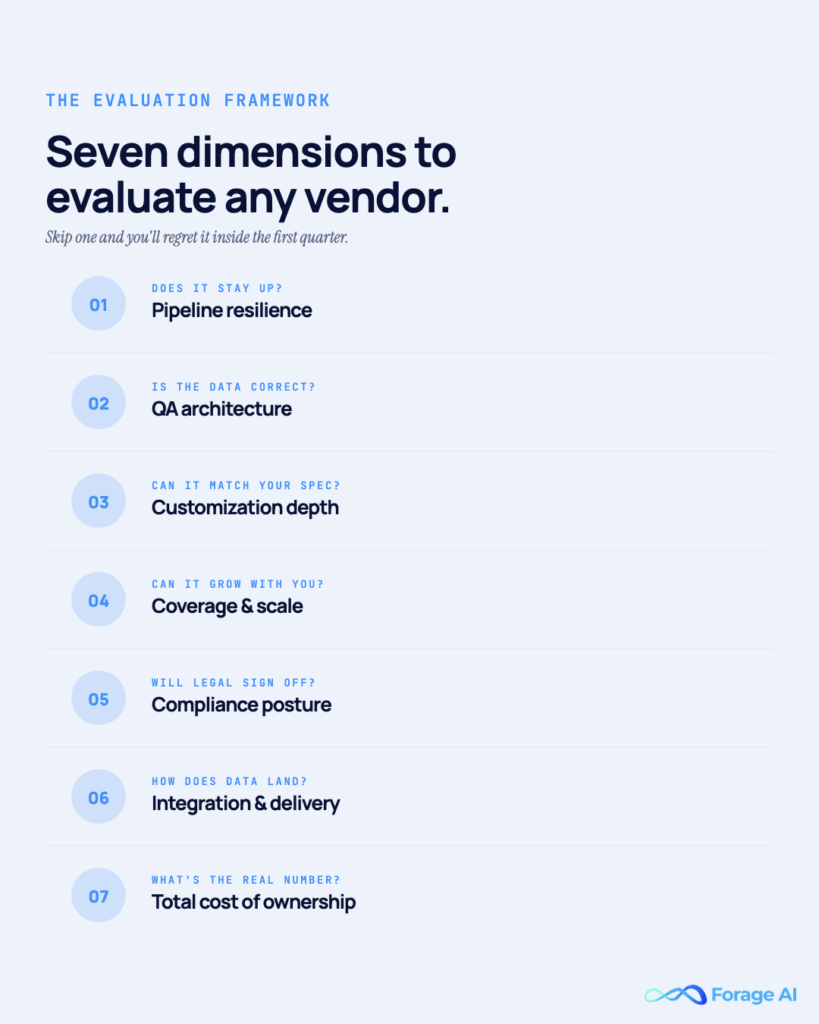

This is where most data extraction evaluations quietly go wrong. Not because anyone’s making a bad-faith argument, but because the evaluation framework being used is generic vendor-selection content from IT procurement playbooks. Those playbooks don’t surface the dimensions that actually determine whether a data extraction partnership delivers at 18 months: pipeline resilience, QA architecture, data ownership, customization depth, coverage breadth, partnership model, and compliance.

This article provides a framework for evaluating data extraction companies. Seven dimensions, a printable scorecard weighted by buying-committee role, and a compliance gate that screens out vendors who haven’t caught up to 2026. Written for the champion building the case for the Economic Buyer, the Technical Evaluator, and the Legal/Compliance Influencer. Not for the procurement lead running a spreadsheet.

QUICK DIGEST

- Seven data-extraction-specific dimensions that generic vendor checklists systematically miss

- A weighted scorecard mapped to buying-committee roles (CFO, Engineering Lead, Legal/Compliance, Primary Champion)

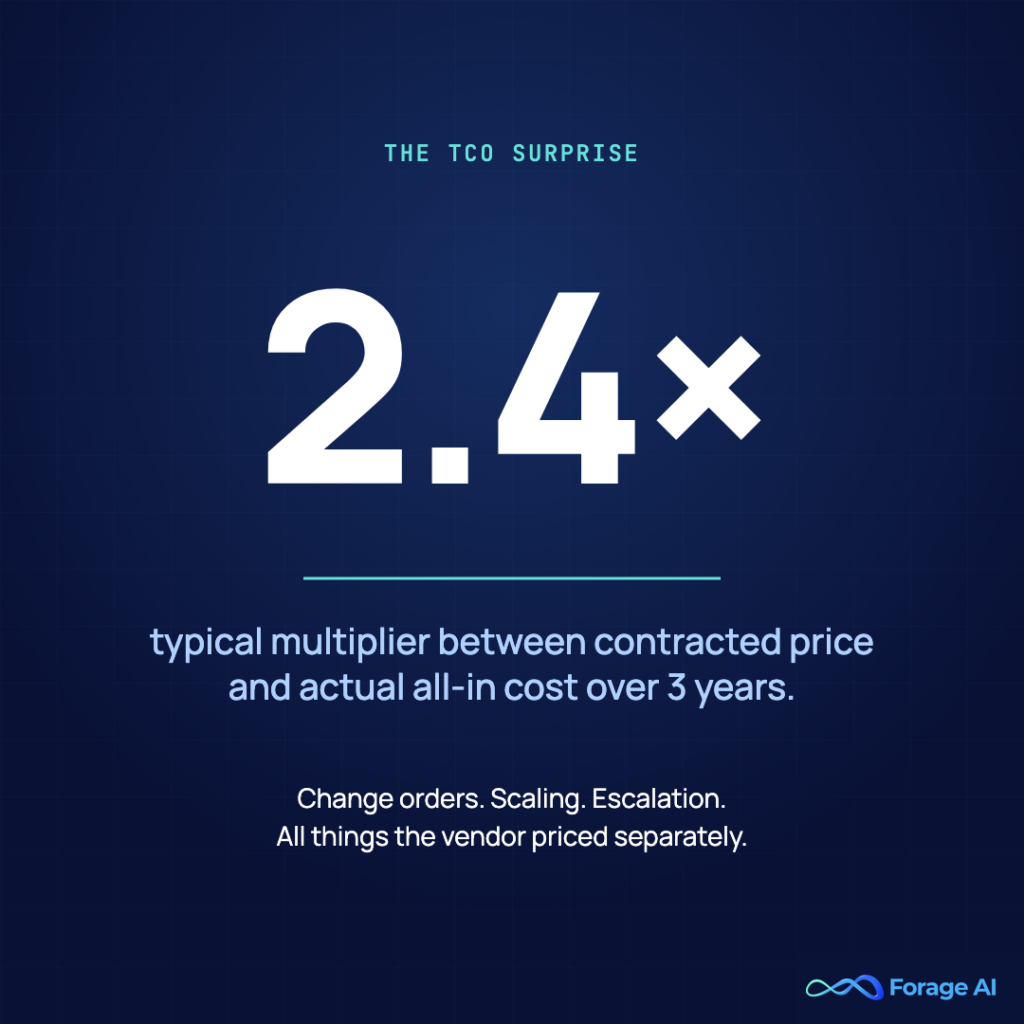

- TCO framed against the true in-house baseline (~$250K year 1, $375K year 3), not against zero cost

- A 2026 compliance pass/fail gate (SOC 2, GDPR, HIPAA, EU AI Act) applied before dimension scoring

Why Generic Vendor Checklists Miss the Point for Data Extraction

The average vendor-evaluation checklist has 7 to 10 categories: capabilities, cost, security, implementation, support, references, and contract terms. Scored 1–5. Weighted by some formula. This framework can be used to evaluate CRM software, HRIS platforms, or ERP systems. It does not work for data extraction.

The problem is that “capabilities” collapses the dimensions that separate functional data extraction partners from those that unravel at month 18 into a single category. Pipeline resilience is not a feature. QA architecture is not a capability claim. Data ownership is not a setting. These are structural, architectural, and organizational properties of the vendor, and generic evaluation frameworks fail to surface them.

The symptom is consistent. Two vendors score nearly identically on a generic 10-category rubric. The decision collapses to price or relationship. Eighteen months into the contract, one is performing at 94% accuracy on your specific data, and the other is at 71%, and you can’t easily migrate without absorbing the switching cost of a multi-year pipeline rebuild. Industry benchmark studies consistently find that the vast majority of enterprise leaders have experienced pipeline failures that have delayed analytics or AI initiatives. Most of those failures trace back to the wrong vendor decision, made with the wrong evaluation framework.

What Vendor Demos Won’t Surface

Vendor demos run on clean, standardized data: neat PDFs, predictable website layouts, stable source structures. Your production reality is negotiated counterparty contracts, scanned, signed documents, websites that redesign themselves quarterly, and schema drift without warning. The demo shows you the vendor’s best-case scenario. The evaluation framework has to tell you what happens at the median and the tail.

Worth knowing:

- Industry 2026 evaluation guidance consistently points to the same failure mode: buyers compare feature lists instead of demanding proof of outcomes

- Generic vendor-evaluation frameworks weight capabilities, cost, and security; data extraction evaluations should add pipeline resilience and QA architecture as first-order dimensions

- The cost of a wrong vendor decision over a 2–3 year contract regularly exceeds $500K in direct costs plus switching costs

Why should I care about a data-extraction-specific checklist if my procurement team has a standard template?

Because the standard template is designed for broadly comparable software categories. Data extraction is not generic IT software. The production failure modes that determine long-term value (source changes, schema drift, data ownership clauses) aren’t included in a standard evaluation rubric. Before applying the seven dimensions, confirm you’re actually buying rather than building — see our Build or Buy Strategic Guide.

Dimension 1: Pipeline Resilience — The Failure Mode Your Evaluation Probably Misses

Pipeline resilience is how the vendor’s system handles source changes, schema drift, anti-bot updates, authentication renewal, and the silent failures that don’t trigger error logs. It has the highest production impact and the lowest demo visibility.

What to ask: What happens when a source website changes structure? Do pipelines self-heal, or do they require manual intervention? What’s your SLA on source-change recovery? Can we see documentation of how failures are classified and routed? What percentage of source changes are handled automatically versus requiring engineering intervention?

What to look for: Classified failure handling (a 403 response is not treated as a selector miss; an empty DOM is not treated as a timeout). AI-regenerated selectors at setup, not per-page inference. Schema validation at write time. Fallback selectors on every critical field. Observability at the data shape level, not just job status.

Why this matters at the committee level: If extraction supports a paying-customer product or feeds AI systems, resilience is the dimension most likely to determine whether the partnership works at 18 months. A 2026 annual state of data quality survey reports that data teams spend 60–80% of their time maintaining fragile pipelines. The vendor you pick either absorbs that burden architecturally or passes it back through ticket volume.

The “It Worked in the Demo” Trap

Vendor demos standardize away the real problem. Request a live test against your production-complexity data, including degraded scans, negotiated contracts, and at least five counterparty variations, before signing. A system that hits 95% on clean demos and drops to 70% on your archive is not evaluating as advertised. See our breakdown of how enterprise systems actually scale reliably and why most teams regret building this in-house for the failure-mode inventory.

Worth knowing:

- In-house extraction TCO typically runs $250K year 1, $300K year 2, $375K year 3 for a 100-source pipeline (see our 2026 Budget Analysis); 60–70% of that is maintenance, not build

- A publicly cited enterprise case reported 40% of data-engineering hours weekly on scraper fixes alone

- Pipeline resilience is where Economic Buyers and Technical Evaluators most often disagree. Scoring both sides against the same dimension forces the conversation.

How do I evaluate pipeline resilience before signing?

Request documented failure-recovery procedures, run a live test against your own degraded and amended data (not vendor-provided samples), require a contractual SLA on self-healing behavior, and reference-check customers specifically on source-change incidents.

Dimension 2: QA Architecture — Accuracy Math, Not Accuracy Claims

Every vendor claims high accuracy. The question that matters is what architecture produces that number, not what the demo-set measurement shows.

What to ask: What’s your QA-to-delivery team ratio? Do you route low-confidence extractions to human reviewers, and at what confidence thresholds? Is QA a separate organizational function from extraction operations? Do corrections feed back into your models through reinforcement learning from human feedback? Can we see an anonymized QA incident report?

What to look for: A layered QA approach where every extraction passes through both automated checks and human expert review. Organizational separation between extraction operators and QA reviewers. Confidence scoring per field, not binary. Domain-specialist reviewers rather than generalists. Feedback loops at a cadence that actually moves model accuracy.

Why this matters at the committee level: Gartner puts the average annual loss from poor data quality at $12.9M per organization. IBM puts the US aggregate cost at $3.1 trillion. A vendor with a 3x QA-to-delivery team ratio can deliver materially better data quality at scale than one that claims “human review” as a feature. The difference compounds over millions of extractions.

Forage operates at this tier. Every extraction passes through both automated checks and human expert verification, a 200% QA approach. The QA team is three times the industry average for its size relative to the delivery team. Corrections feed back into models through reinforcement learning from human feedback, and domain expertise compounds across 12+ years of operational history.

When “Human in the Loop” Is Just a Spot-Check

Spot-check review of low-confidence outputs, without routing rules, trained reviewers, or feedback loops, is a marketing claim, not an architecture. Real HITL requires all three. For the architectural deep-dive, see Human-in-the-Loop Data Extraction.

Worth knowing:

- Chad Sanderson’s Data Contracts (O’Reilly, 2024): detection-based data quality doesn’t scale; prevention must be enforced in CI/CD, not downstream as dashboards

- Barr Moses: “AI will only ever be as useful as the first-party data that powers it.”

- A 97% accurate pipeline running 100,000 extractions daily produces 3,000 wrong records per day, enough to compound into business-decision impact within weeks

What’s the difference between “human review” as a feature and as an architecture?

A feature is a bullet point on the datasheet. An architecture routes extractions by confidence score, has trained domain-specific reviewers, and closes the loop with feedback into the model. Ask to see the workflow in practice, not the claim in a deck.

Dimension 3: Data Ownership and Governance — Who Owns What, Forever?

The single most underweighted dimension in most evaluations. Buying committees assume ownership; few write it into the contract.

What to ask: Do we retain full contractual ownership of all extracted data? Can you aggregate our data with other clients’ data for any purpose, including model training or aggregated market reports? What are the rights to delete upon contract termination? Is data lineage exportable independent of your platform? Is on-premise deployment available for regulated data?

What to look for: Data non-resale written into the contract, not promised in the deck. Full client retention of all extracted data. Lineage metadata portable in standard formats. On-premise deployment option for sensitive environments. A clear exit clause with reasonable data portability.

Why this matters at the committee level: For data product builders, a vendor aggregating your extracted data into market reports is a direct competitive conflict. For regulated verticals (BFSI, Healthcare), GDPR rights to delete and audit must extend to the vendor. For anything containing PII or PHI, vendor model training on your data is a compliance incident waiting to surface. IBM’s 2025 Institute for Business Value research found that 43% of COOs identify data quality as their top data priority, with 7% of organizations losing more than $25M annually to data-quality failures. Data ownership directly feeds into data quality accountability.

The Aggregated-Data Loophole

“We don’t resell your data” often means “we don’t resell raw records, but we train models on your data and publish aggregated insights.” The clause language matters. Ask explicitly: Does the contract permit any use of our data beyond direct delivery to us? The answer should be no.

Worth knowing:

- Raconteur’s 2026 EU AI Act audit guide: “In the 2026 compliance environment, screenshots and declarations are no longer sufficient. Only operational evidence counts.”

- Legal/Compliance weights this dimension highest in the buying committee. Flag any ambiguous contract language early, before the Economic Buyer has finalized commercial terms.

Why is data ownership the most underweighted dimension?

Because everyone assumes it. Most buying committees never ask to see the specific clause language on non-resale, aggregation rights, or model training. The assumption holds until the renewal cycle, when the ownership gap becomes a bargaining point against you.

Dimension 4: Customization Depth — Template or Tailored?

“Custom” is one of the most abused terms in vendor marketing. A vendor can be customized in the sense of letting you map template fields to your own schema. Or custom-built in the sense of pipelines designed around your exact fields, business rules, taxonomies, and client dictionaries. These are different architectures with different cost profiles and different failure modes.

What to ask: Are pipelines template-based, schema-mapped, or custom-built to our business rules? Can you integrate with our client dictionaries and data taxonomies? What happens if our schema evolves mid-contract? Can you show me how you handle a field we define that doesn’t exist in your standard schema?

What to look for: Pipelines designed around client fields and business rules, not mapped to vendor templates. Integration with client dictionaries, taxonomies, and ontologies. Flexible delivery formats (CSV, JSON, XML, direct API) matching client data infrastructure. Niche-field capability, meaning the ability to extract highly specific nested data that doesn’t appear in any standard schema.

The Customization Quadrant

| Quadrant | What it is | Who it fits |

| HIGH SCALE / LOW CUSTOM | Standardized schemas, fixed fields, commodity extraction | Teams with generic data needs and high volume |

| LOW SCALE / HIGH CUSTOM | Custom pipelines but limited volume (DIY or niche) | Small-scale specialized extraction needs |

| LOW SCALE / LOW CUSTOM | Pre-built datasets, download-and-use | Teams with standard off-the-shelf requirements |

| HIGH SCALE / HIGH CUSTOM | Custom-built pipelines for millions of sources of volume, the rarest market quadrant | Enterprise data extraction at production scale |

Why this matters at the committee level: If your data needs are specific to your product or vertical, template-based extraction will lose 10–20% of the fields that actually matter. If your schema will evolve over the contract term, only custom-built pipelines can avoid rework with every change. See our treatment of custom web data extraction versus pre-built tools for the distinction between the two.

When “Customizable” Means “Remapped Templates”

Vendors often claim customization but deliver templated extraction with field-name mapping. Ask to see a custom field added live during evaluation, with the workflow end-to-end, from schema change to production extraction. The time and effort required tells you which quadrant the vendor actually operates in.

Worth knowing:

- Per Mordor Intelligence, managed web-scraping services are growing at ~15.1% CAGR versus ~14.2% for web-scraping software (2025–2030). Organizations are actively shifting toward customized managed extraction.

- The Primary Champion (Head of Data/Product) typically weighs customization highest because they’re closest to the gap between templated extraction and business-rule reality

What does “custom” actually mean in data extraction?

Pipelines built around your business rules and schema, with the ability to integrate your domain taxonomies and evolve as your requirements change. If the vendor’s “customization” is field-name mapping on a standard schema, that’s templated, not custom.

Dimension 5: Coverage and Scale — Web + Documents + Formats

Most enterprise data extraction needs span multiple modalities. A vendor specialized only in web scraping cannot handle your contract PDFs. A document-extraction specialist cannot handle competitor monitoring. A social-media specialist handles neither. Coverage breadth is a selection criterion, not a nice-to-have.

What to ask: Can you handle millions of sources across the web, documents, and APIs? What’s your current production scale, in websites, documents, and profiles? Can you handle our volume growth (5x, 10x) without re-architecting? What document formats and file-degradation types do you handle (faded scans, handwritten annotations, 2,000+ page contracts)? What industries have you delivered in and at what volume of domain-specific training data?

What to look for: Production-scale proof, not capability claims. Multi-modal coverage (web + documents + APIs) in the same vendor. Vertical-specific training data. Document-extraction capabilities (enhanced OCR, domain-trained ML for degraded content). Years of operational history. Scale headroom for growth without re-negotiation.

Forage operates at this scale. Forage crawls 500M+ websites, parses 10M+ documents, monitors 5M+ professional profiles, and has 12+ years of operational experience across 15+ industries. Three pillars (Web Data Extraction, Intelligent Document Processing, and AI-Powered Solutions) mean one vendor relationship spans the full modality range, rather than stitching three specialists.

The “Millions of Sources” Claim vs Production Reality

Capability claims are often theoretical. Ask for production-volume numbers, years of operation at the current scale, and reference customers with your volume. A vendor that has “architecture designed to scale to millions” but is running at thousands today hasn’t actually validated the architecture at your scale. For document-extraction specifically, see our comprehensive IDP guide and the financial services companion for vertical-specific context.

Worth knowing:

- Corporate legal GenAI adoption more than doubled year-over-year, from 23% in 2024 to 52% in 2025 (ACC + Everlaw Generative AI’s Growing Strategic Value for Corporate Law Departments 2025), signaling broader enterprise AI demand that drives extraction-volume growth

- The Technical Evaluator typically weights coverage and scale highest. Production scale matters more than architectural elegance at the committee table.

- Domain-trained models compound over time: the more contracts processed in a vertical, the better the extraction gets at that vertical’s specific patterns

How do I validate “millions of sources” as a real claim?

Ask for current production volume, years operating at that volume, and three reference customers in your industry and size bracket. A vendor that can’t produce all three has aspirational scale, not proven scale.

Dimension 6: Partnership Model — Vendor Relationship or Dedicated Team?

The biggest gap in most vendor evaluations. Ticket-based support creates a chain of hand-offs every time a source changes or a new requirement emerges. Dedicated-team models assign the same engineers from day one, quarter after quarter, building compounding domain expertise specific to your business.

What to ask: Is this a ticket-based support model or a dedicated team? Will we have the same engineers on our account across the contract? Does your team participate in our data strategy discussions, or only respond to requirements we send you? What’s your time-to-launch? Beyond delivering data, does your team proactively surface new data sources or opportunities?

What to look for: Dedicated team model from day one, not just post-go-live. Continuity, where the same engineers work the account quarter-to-quarter. Proactive consultation on data strategy, not reactive ticket response. Rapid time-to-launch (typically 1–2 weeks for enterprise IDP). Partnership language in the contract, not just SLAs.

Forage operates as a partner, not a vendor. Forage assigns a dedicated team from day one. The same engineers manage your pipelines through the contract lifecycle, building domain expertise specific to your business. 100+ data experts across 15+ industries means the pattern in your vertical has probably been encountered before it hits your backlog. The team participates in your data strategy discussions rather than just responding to tickets.

For the managed-vs-self-service category distinction, see Managed vs Automated Web Scraping Services.

What “Dedicated Support” Means vs What Teams Experience

A “Dedicated account manager” often means a single point of contact in a support queue. That’s different from “the same engineers run your account.” Ask to speak with the actual engineers who would run your account, not just the sales engineer or the account manager. Their familiarity with your industry and use case tells you what the partnership will actually feel like.

Worth knowing:

- Market shift: services are growing faster than software in the extraction space. A signal that organizations are actively leaving self-serve tools for managed partnerships at enterprise scale.

- Primary Champions’ weight partnership model is highly valued because they live with the vendor relationship. Economic buyers weigh it less because they don’t see the day-to-day operational cost of ticket friction.

Is “dedicated team” a meaningful distinction or just vendor-speak?

Meaningful. Ticket-based support scales linearly with your volume of requests. Every source change is a new ticket. Dedicated-team models scale with accumulated domain expertise. The tenth request on the same data type is much cheaper than the first.

Dimension 7: Compliance Architecture — SOC 2, GDPR, HIPAA, EU AI Act

On generic checklists, compliance is usually the last category. For data extraction in 2026, it should be a pass/fail gate before anything else is scored.

What to ask: What compliance certifications do you currently hold? Can you share attestation reports? Do you maintain automatic tamper-resistant logging of every extraction event? Can you provide the technical documentation for Article 11 of the EU AI Act? What’s your GDPR data-subject-rights support, including actual deletion and not just access controls? For BFSI and Healthcare specifically, is it SOC 2 Type II? HIPAA-compliant workflows?

What to look for: SOC 2 Type II, not Type I. GDPR with verifiable data subject rights support. HIPAA for regulated healthcare data. EU AI Act Article 11 documentation if extraction feeds high-risk AI deployed to EU users. CCPA for California consumer data. On-premise deployment available for regulated environments.

The 2026 EU AI Act specifics: the remaining provisions apply on August 2, 2026. Penalties for high-risk AI violations range from €15M or 3% of global annual turnover to €35M or 7% for prohibited AI practices (per DLA Piper’s 2026 enforcement analysis and Secure Privacy’s 2026 compliance guide). Jones Walker’s 2026 predictions: legal ops will own AI governance across the enterprise, raising the internal bar on vendor compliance maturity.

Compliance as Configuration vs Compliance as Architecture

Most generic IDP platforms treat compliance as configuration (toggle encryption on, check a SOC 2 box). The gaps show up in audit granularity (can you trace a specific field back to a specific model version?), data-subject-rights support (can you verifiably delete PII from training data?), and tamper-resistance of logs (are they cryptographically signed?). For regulated industries, these are hard failures under the 2026 audit frameworks.

Worth knowing:

- Compliance failure is a pass/fail condition. A vendor that can’t produce tamper-resistant audit logs or lacks SOC 2 Type II attestation shouldn’t make it to capability scoring.

- Legal/Compliance Influencer weighs this dimension at 100% in the buying committee. If compliance fails, nothing else matters.

- Raconteur 2026: “only operational evidence counts.” Screenshots and declarations no longer suffice.

What compliance requirements should I impose on a data extraction vendor in 2026?

SOC 2 Type II, GDPR, HIPAA (for healthcare-adjacent data), and EU AI Act Article 11 documentation if your extraction feeds high-risk AI deployed to EU users. Penalties for high-risk AI violations range from €15M to 3% of global turnover. The compliance gap is a business risk, not just a legal one.

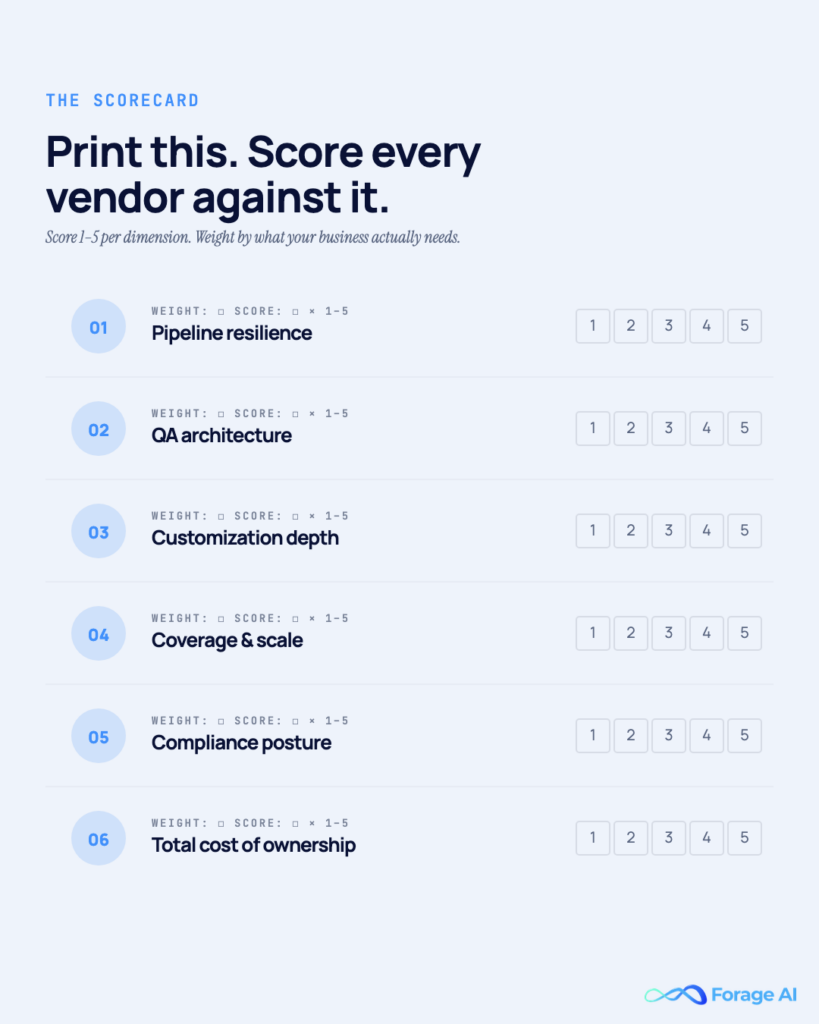

The Enterprise Data Extraction Evaluation Matrix

The scorecard. Print this, bring it to the buying-committee meeting, and score each shortlisted vendor.

The Matrix Structure

- Compliance (Dimension 7): PASS/FAIL gate. Vendor must clear before the rest of the matrix is applied.

- Dimensions 1–6: scored 1–5 per vendor, per dimension.

- Role-weighted totals computed for each buying-committee role.

Role-Based Weighting

| Committee Role | Pipeline Resilience | QA Architecture | Data Ownership | Customization | Coverage & Scale | Partnership Model |

| Economic Buyer (CFO/COO) | 25% | 15% | 20% | 10% | 15% | 15% |

| Technical Evaluator (Eng Lead) | 25% | 25% | 10% | 15% | 20% | 5% |

| Legal/Compliance (Influencer) | 10% | 15% | 25% | 5% | 10% | 5% (+pass/fail compliance) |

| Primary Champion (Data/Product) | 15% | 20% | 15% | 20% | 10% | 20% |

| End User (Data Ops) | 10% | 20% | 5% | 15% | 25% | 25% |

The TCO Baseline

Compare total vendor cost against the in-house baseline, not against zero. Industry benchmarks put in-house extraction TCO at ~$250K in year 1, $300K in year 2, and $375K in year 3 for a 100-source pipeline, with 60–80% of data-engineering time consumed by maintenance. (Typical breakdown: 1.5 data engineers at $160K fully loaded = $240K; cloud infrastructure $10–30K/year; plus maintenance overhead. Your mileage varies significantly by source complexity and schema change frequency.) The vendor cost is the delta, not the absolute. Our Services vs In-House 2026 Budget Analysis has the full math. If you haven’t finalized the build-vs-buy decision, start with the Build or Buy Strategic Guide.

Why Feature-Comparison Scores Lie

Demo performance, reference-check responses, and vendor-provided proof points are systematically rosier than production reality. Ground-truth every score with a live test of your own data, including degraded documents, negotiated contracts, and amended source material. The delta between demo score and your-data score is where the real evaluation happens.

Worth knowing:

- Industry RFP evaluations typically weight capabilities 30–40%, cost 20–25%, security 15–20% (Responsive 2025). The seven-dimensional data-extraction matrix is a specialization of that pattern, not a replacement.

- Disagreements across committee roles are features, not bugs. They surface which dimensions matter most to whom.

How should I weight the seven dimensions in the scorecard?

By the buying committee’s role. Compute a weighted score per role, then reconcile. The final decision is either a weighted average across roles or an executive override grounded in strategic priority. Don’t average the dimensions equally. That hides where the committee actually disagrees.

When scores are tied: If the role-weighted scores across vendors differ by less than 5%, the feature comparison is not the deciding factor. The downside risk profile is. Ask: Which dimension, if it fails in year 2, would be hardest to recover from? For most enterprises running business-critical data pipelines, it’s either pipeline resilience (an unplanned engineering drain that compounds as sources change) or data ownership (a contractual gap that becomes a lock-in lever at renewal). Default to the dimension with the highest long-term downside for your specific use case. The vendor that scores higher on that dimension wins the tie.

How to Run the Evaluation — Process, Buying Committee, Timeline

Week 1–2: Compliance readiness screen. Before capability scoring, screen every vendor against Dimension 7. Any vendor failing SOC 2 Type II, GDPR support, or (where applicable) HIPAA is eliminated. EU AI Act Article 11 readiness is the new gate for AI-feeding extraction.

Week 2–4: Capability scoring on Dimensions 1–6. Apply the matrix. Score each remaining vendor 1–5 per dimension. Document the evidence for each score. Don’t rely on memory.

Week 3–5: Live test. Provide each vendor the same representative test set, including degraded scans, negotiated counterparty documents, and amended material. Run the test against production-complexity data, not vendor-provided samples.

Week 4–6: Reference checks. Focus on customers at your volume and vertical, not generic reference logos. Ask specifically about source change recovery, QA incident response, and contract renewal experience.

Week 5–7: Buying-committee review. Present weighted scores by committee role. Surface dimension disagreements explicitly. Use disagreements to sharpen weighting rather than paper them over.

Week 6–8: Contract negotiation. Data ownership, no-resell, exit clause, pipeline resilience SLA, and on-premise option if needed. Generic contract templates from procurement often miss these.

The Four Evaluation Failures

Avoid these common patterns: demo-only evaluation (no live testing); skipped compliance screening (reviewed at the contract stage instead of the screening stage); committee-by-committee discussion without weighted-dimension scoring (turning into personality-driven decisions); and TCO math that ignores the in-house baseline (making vendor costs look larger than they are).

For the list of companies you’d evaluate against this framework, see our 2026 Enterprise Web Scraping Companies Buyer’s Guide.

Worth knowing:

- Typical enterprise data-vendor evaluation runs 4–12 weeks end-to-end (Atlas 2026, Ivalua 2026). Accelerated evaluations under 4 weeks usually skip live testing, which is the highest-risk category of evaluation failure.

- EU AI Act Aug 2, 2026, enforcement creates urgency for compliance-first screening. Audit evidence has to exist at that date for high-risk AI extraction systems.

How long should the evaluation take?

Four to twelve weeks for a proper enterprise evaluation, depending on shortlist size and committee coordination. If timeline pressure is forcing under four weeks, escalate. The cost of the wrong vendor over a 2–3-year contract is measured in the hundreds of thousands, far more than the evaluation cycle saves.

Related Articles

- Top Enterprise Web Scraping Companies — 2026 Buyer’s Guide — The company list to run through this framework.

- Build or Buy? A Strategic Guide to Web Data Extraction — Decision framework for whether to evaluate vendors at all.

- 2026 Data Extraction Services vs In-House Teams Budget Analysis — The TCO math behind the in-house baseline.

- How Enterprise Web Data Extraction Systems Scale Reliably — A Pipeline Resilience Deep Dive.

- Human-in-the-Loop Data Extraction — QA architecture deep-dive.

Frequently Asked Questions

What should an enterprise evaluation checklist for data extraction companies include?

Seven data-extraction-specific dimensions: pipeline resilience, QA architecture, data ownership, customization depth, coverage breadth, partnership model, and compliance. Generic vendor-evaluation checklists collapse all 7 into a single “capabilities” category, which is why they produce inconclusive comparisons.

Who should be on the buying committee for a data extraction vendor?

Primary Champion (Head of Product or Data Strategy Lead), Economic Buyer (CFO, COO, or Business Unit CEO), Technical Evaluator (Engineering Lead or Data Engineering Manager), End User (Data Ops Manager or Analyst), and Influencer (Legal/Compliance). Each cares about different dimensions. Weight the scorecard accordingly rather than averaging equally.

How long does vendor evaluation typically take?

Four to twelve weeks for a proper enterprise evaluation. Accelerated evaluations under four weeks typically skip live testing, which is the highest-risk category of evaluation failure.

What’s the real cost of NOT doing a thorough evaluation?

The in-house alternative benchmarks at roughly $250K in year 1 and $375K in year 3 for a 100-source pipeline (industry analyses), with 60–80% of data-engineering time spent on maintenance. The wrong vendor choice over a 2–3 year contract routinely costs seven figures in direct spend, switching costs, and opportunity costs, far more than the evaluation effort itself.

How do I evaluate pipeline resilience before signing?

Request documented failure-recovery procedures, run a live test against your own degraded and amended data (not vendor-provided samples), require a contractual SLA on self-healing behavior, and reference-check customers specifically on source-change incidents.

What compliance requirements should I impose on a data extraction vendor in 2026?

SOC 2 Type II for access controls and audit logging. GDPR for data subject rights, including verifiable deletion. HIPAA for healthcare-adjacent data. EU AI Act Article 11 if extraction feeds high-risk AI deployed to EU users. Penalties for high-risk AI violations range from €15M to 3% of global turnover.

How should I weight the seven dimensions in the scorecard?

By the buying committee’s role. The Economic Buyer weighs pipeline resilience and data ownership highest. The Technical Evaluator weighs resilience, QA, and coverage. Legal/Compliance weighs compliance as pass/fail, plus data ownership. The Primary Champion weighs partnership, customization, and QA. Reconcile the role-weighted scores across the committee.

Sources

- IBM Institute for Business Value (2025): Cost of poor data quality — COO priorities and organizational loss data — ibm.com

- **Chad Sanderson, Data Contracts (O’Reilly, 2024):** Prevention vs. detection-based data quality — Data Products Substack

- Raconteur (2026): EU AI Act Compliance: A Technical Audit Guide for the 2026 Deadline — raconteur.net

- Mordor Intelligence: Web Scraping Market — managed services vs. software CAGR comparison — mordorintelligence.com

- ACC + Everlaw (2025): Generative AI’s Growing Strategic Value for Corporate Law Departments — GenAI adoption in corporate legal — everlaw.com

- Secure Privacy (2026): EU AI Act 2026 compliance requirements and penalty framework — secureprivacy.ai

- Jones Walker LLP (2026): Ten AI Predictions for 2026 — legal ops and enterprise AI governance — joneswalker.com

- Responsive (2025): RFP Evaluation Criteria — enterprise vendor-evaluation weighting benchmarks — responsive.io

- Atlas (2026): Vendor Selection Process — enterprise evaluation timeline benchmarks — atlassystems.com

- Ivalua (2026): Vendor Selection Process — enterprise evaluation methodology — ivalua.com