A pipeline returns 200 OK on every request. The extraction logs say “success.” The dashboard reports that the data is up to date. And the numbers are wrong.

Not subtly wrong — wrong by a margin that moves a pricing decision, distorts a model evaluation, or breaks a downstream join. The kind of wrong that nobody catches for two weeks, until somebody notices an outlier and traces it back. By then, decisions have been made on bad data.

This is the failure mode that pushes teams from “we have scrapers” to “we have a custom pipeline.” It is rarely about scale, and almost never about cost. It is about whether the data can be trusted when it lands in production.

As of 2026, the operational regime has shifted enough that this distinction matters more than it did 18 months ago. Cloudflare’s 2024-2025 anti-bot iterations changed the economics of proxying. Schema-drift tolerances have tightened as more pipelines feed AI grounding. Procurement teams now ask vendors for per-record audit trails. Custom web scraping has stopped being a nice-to-have for sufficiently complex pipelines: it is the operational shape any team running production extraction beyond a handful of sources will eventually need.

This article walks through the tools-vs-pipelines question, defines what makes scraping “custom” (it is not what you think), maps the production architecture, and gives you a self-assessment framework — SHIFT — for deciding when custom is no longer optional.

Quick Digest

- The four properties that make a scraping operation production-grade: per-source adaptation, schema enforcement at write, embedded QA, and an audit trail per record.

- The five-stage pipeline architecture (Source Discovery → Extraction → Transformation → QA → Delivery) and where it most commonly fails.

- The SHIFT self-assessment: Scale, Heterogeneity, Impact, Freshness, Trust — score 3+, and tools have stopped working for you.

- Build vs Buy vs Hybrid economics, with the pivot point most production teams hit.

- Compliance posture as a procurement gate (audit trails, jurisdiction-aware policies, robots.txt as norm, not contract).

The Tools-vs-Pipelines Question

Most teams start with a tool. There are good reasons. Off-the-shelf scrapers are cheap, fast to deploy, and work fine for prototypes — a few sources, a manual schema, and a weekly cadence. The default first answer to “how do we get this data?” is reasonable.

The default stops working past a recognizable threshold. The threshold is not “more sources” in the abstract. It is the point at which adding source N+1 forces you to refactor source 1. That moment usually comes between 30 and 60 sources for non-trivial extraction targets, sooner if the targets include heterogeneous regions, JavaScript-heavy pages, or authentication. After that, the tool starts to feel like glue holding together exceptions, and the team’s weekly standup is dominated by “the scraper for X is broken again.”

What you are running into is not a tool-quality problem. It is a category mismatch. A tool optimizes for self-service speed; a pipeline optimizes for reliability. Different jobs.

A custom pipeline replaces specific operational shapes — the kind of work that grows non-linearly with source count and is unrewarding to staff. Selector maintenance after layout changes. Proxy rotation strategy. Schema versioning that survives a feed contract. Anomaly detection on output distributions. Provenance capture per record. None of these is about “writing better code.” They are about treating extraction as governed infrastructure with the same expectations as any other production system — the discipline that separates ad-hoc scripts from genuine data collection automation at production scale.

If a team has been running scrapers in-house for over a year and is talking more about reliability than about new sources, that team is in this category mismatch. The path forward is either to invest in the operational discipline custom pipelines require or to delegate it to a partner whose operational shape is already tuned for it. The economics of that choice come up in the Build vs Buy vs Hybrid section below. For the prerequisite landscape, our web data extraction techniques and applications guide covers the foundational tooling layer that this article assumes.

Expert Insight: The transition from tools to a pipeline is rarely planned. It happens after the second silent-failure incident in a quarter, when leadership starts asking pointed questions and the team realizes the existing setup cannot answer them.

Quick Summary — When does a tool stop being enough? When adding source N+1 forces you to refactor source 1. For most teams, that is between 30-60 sources, sooner with heterogeneous targets.

What Makes Web Scraping “Custom”?

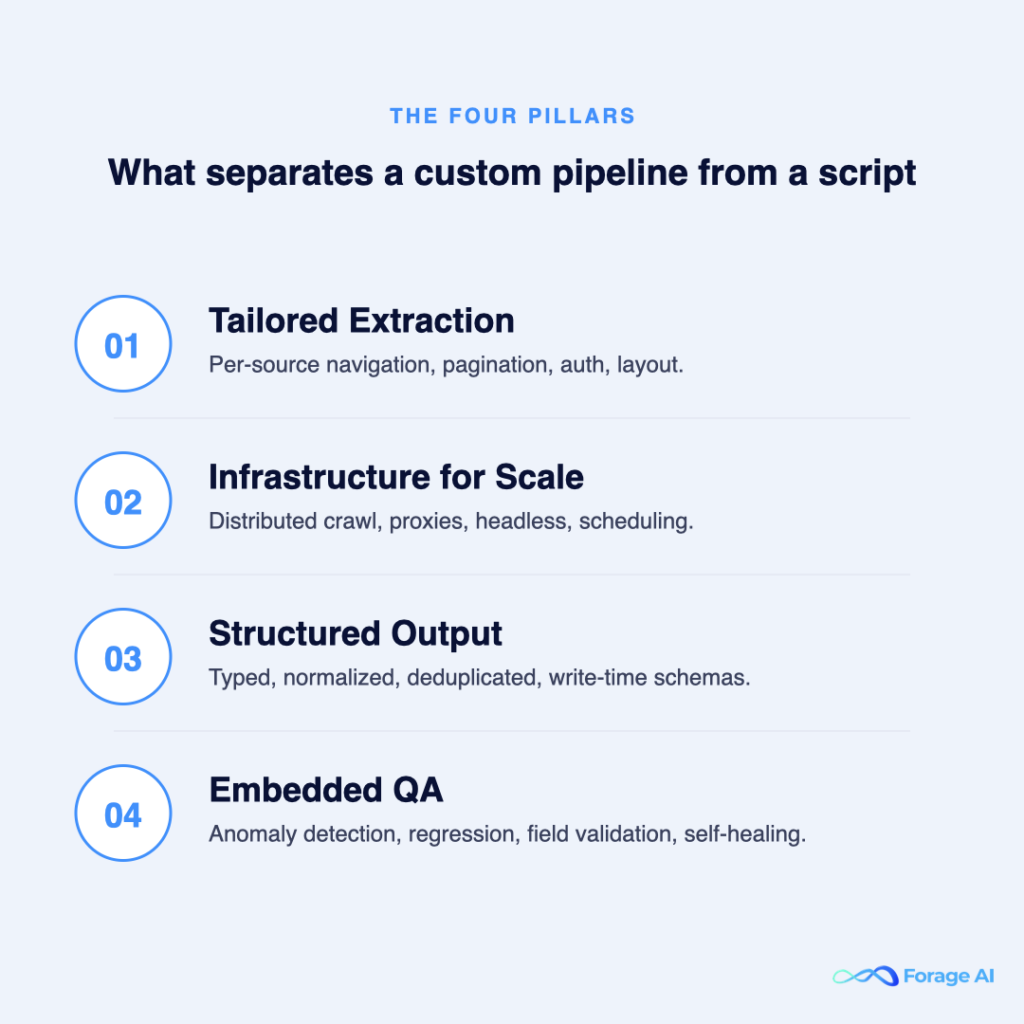

“Custom web scraping” is mostly used in search results to mean “code you write yourself instead of using a pre-built tool.” That definition is too narrow. Code is the easy part. What makes a pipeline custom (production-grade, defensible, durable) is four properties operating together.

Per-Source Adaptation

Each source has its own logic surface. Pagination may be cursor-based for paginated catalogs, infinite scroll that needs scroll-event orchestration for SPAs, or offset-based for legacy systems. Authentication ranges from session cookies to OAuth refresh-token rotation to per-request HMAC signing. JavaScript rendering varies: some pages need a full headless browser; others can be parsed from the initial HTML if you know what you are doing. Generic tools apply one pattern to all sources. Custom pipelines pick the right pattern per source, usually behind a per-source adapter abstraction so the rest of the pipeline doesn’t care.
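The per-source adapter idea can be sketched in a few lines of Python. This is a minimal illustration, not a reference design: `SourceAdapter`, `OffsetAdapter`, `CursorAdapter`, and `plan` are hypothetical names, the URLs are placeholders, and the cursor list stands in for values a real fetch loop would read from each response.

```python
from dataclasses import dataclass
from typing import Iterator, Protocol


class SourceAdapter(Protocol):
    """Hypothetical shared interface: each source hides its own pagination logic."""

    def page_requests(self) -> Iterator[dict]:
        ...


@dataclass
class OffsetAdapter:
    """Legacy-style source paged by offset and limit."""
    base_url: str
    total: int
    limit: int = 50

    def page_requests(self) -> Iterator[dict]:
        for offset in range(0, self.total, self.limit):
            yield {"url": self.base_url, "params": {"offset": offset, "limit": self.limit}}


@dataclass
class CursorAdapter:
    """Catalog-style source paged by an opaque cursor."""
    base_url: str
    cursors: list  # stand-in for the cursors a real response would return

    def page_requests(self) -> Iterator[dict]:
        # The first page carries no cursor; each later page carries the previous one.
        yield {"url": self.base_url, "params": {}}
        for cursor in self.cursors:
            yield {"url": self.base_url, "params": {"cursor": cursor}}


def plan(adapter: SourceAdapter) -> list:
    """The rest of the pipeline only sees the shared interface."""
    return list(adapter.page_requests())
```

Adding a new source then means writing one more adapter class; `plan` and everything downstream of it stay unchanged.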

Schema Enforcement at Write

The biggest source of silent corruption is fields that mutate without anyone noticing — a price field that suddenly carries a currency prefix, a date that shifts from ISO to localized format, a category field that picks up a new enum value. Schema enforcement at write — not at read — catches drift at the boundary. Typed fields with explicit cast rules. Idempotent writes keyed by a deduplication signature. Versioned schemas so a downstream consumer doesn’t break when an upstream source mutates. The pattern that survives the warehouse: source-adapter normalizes to a versioned target schema; the target schema is the contract; consumers join against the contract, not the source. Schema versioning at the source-adapter layer is the highest-leverage discipline most in-house teams skip.
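A minimal sketch of write-time enforcement follows, assuming a toy record with `sku`, `price`, and `date` fields and an in-memory dict as the store. The field names, the `v1` version label, and the choice of deduplication key are illustrative assumptions, not a prescribed schema.

```python
import hashlib
import re
from datetime import date
from decimal import Decimal

SCHEMA_VERSION = "v1"  # hypothetical target-schema version


def cast_price(raw: str) -> Decimal:
    """Reject anything that is not a plain decimal, e.g. a new currency prefix."""
    text = raw.strip()
    if not re.fullmatch(r"\d+(\.\d{1,2})?", text):
        raise ValueError(f"price drifted from expected format: {raw!r}")
    return Decimal(text)


def cast_date(raw: str) -> date:
    """Accept ISO 8601 dates only; localized formats raise ValueError."""
    return date.fromisoformat(raw.strip())


def dedup_key(source: str, sku: str, observed: date) -> str:
    """Deterministic signature so a re-run overwrites rather than duplicates."""
    return hashlib.sha256(f"{source}|{sku}|{observed.isoformat()}".encode()).hexdigest()


def write(store: dict, source: str, raw: dict) -> str:
    """Validate first, then upsert keyed by the deduplication signature."""
    observed = cast_date(raw["date"])
    record = {
        "schema_version": SCHEMA_VERSION,
        "sku": raw["sku"],
        "price": cast_price(raw["price"]),
        "observed": observed,
    }
    key = dedup_key(source, raw["sku"], observed)
    store[key] = record
    return key
```

Because the casts run before the upsert, a drifted value such as `"$19.99"` fails at the boundary instead of landing in the store, and writing the same observation twice leaves a single row.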

Embedded QA

Validation is not a step you run after extraction. It is interleaved through the pipeline. Anomaly detection at the row level (sudden distribution shifts in a numeric field, missing-value spikes, format drift). Regression suites against historical baselines (a small reference fixture is replayed in each run, asserting the expected output). Field-level validation with type and range constraints. Self-healing logic where possible — known selector patterns retried against a small corpus of fallback selectors before declaring failure. Quality is a property of the pipeline, not a stage.
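Two of the ideas above, fallback selectors and a replayed reference fixture, can be sketched together. To stay dependency-free this example uses regular expressions in place of real CSS or XPath selectors; the patterns, the fixture snippets, and the function names are all made up for illustration.

```python
import re
from typing import Optional

# Hypothetical fallback chain for one field, ordered from newest layout to oldest.
PRICE_PATTERNS = [
    r'data-price="([\d.]+)"',
    r'<span class="price">\s*([\d.]+)\s*</span>',
]


def extract_price(html: str) -> Optional[str]:
    """Try each known pattern in turn; None means every fallback failed."""
    for pattern in PRICE_PATTERNS:
        match = re.search(pattern, html)
        if match:
            return match.group(1)
    return None


# Small reference fixture replayed on every run: stored snippet -> expected value.
FIXTURES = [
    ('<div data-price="19.99"></div>', "19.99"),
    ('<span class="price"> 4.50 </span>', "4.50"),
]


def regression_failures() -> list:
    """Return the fixtures whose extracted value no longer matches the baseline."""
    return [(html, want) for html, want in FIXTURES if extract_price(html) != want]
```

Running `regression_failures()` at the start of each run gives a cheap signal that a code change broke a known layout before any live page is fetched.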

Audit Trail per Record

Every record should carry its own provenance: source URL, fetch timestamp, access method (proxy tier, headers, retry count), and a content hash. This is not optional for any team whose data passes through a procurement-side legal review. Enterprise-buyer questionnaires in 2026 ask whether the vendor can, on demand, produce the source URL and timestamp for any extracted data point that ends up in a regulated decision. Pipelines without audit infrastructure fail this question in the first round, and the deal stalls.
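As a rough sketch, the provenance fields listed above can travel with each record as a small immutable structure. The `Provenance` class, the `_provenance` key, and the proxy-tier labels are assumptions for illustration; request headers are omitted to keep the example short.

```python
import hashlib
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class Provenance:
    source_url: str
    fetched_at: str       # ISO 8601 timestamp in UTC
    proxy_tier: str       # e.g. "datacenter" or "residential"
    retry_count: int
    content_sha256: str   # hash of the raw response body


def with_provenance(record: dict, body: bytes, url: str, proxy_tier: str, retries: int) -> dict:
    """Attach provenance to an extracted record without mutating the input."""
    prov = Provenance(
        source_url=url,
        fetched_at=datetime.now(timezone.utc).isoformat(),
        proxy_tier=proxy_tier,
        retry_count=retries,
        content_sha256=hashlib.sha256(body).hexdigest(),
    )
    return {**record, "_provenance": asdict(prov)}
```

Hashing the raw body rather than the parsed fields means the stored hash can later be checked against an archived copy of the page, independent of any parser changes.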

Expert Insight: The four properties are not independent — they reinforce each other. Schema enforcement without audit trail produces correct-shaped data with no defensible provenance. Audit trail without QA produces auditable garbage. The teams that get production-grade extraction right run all four as a single system.

Quick Summary — What makes scraping “custom”? Four properties operating together: per-source adaptation, schema enforcement at write, embedded QA, and audit trail per record. Code is the easy part.

The Five-Stage Architecture

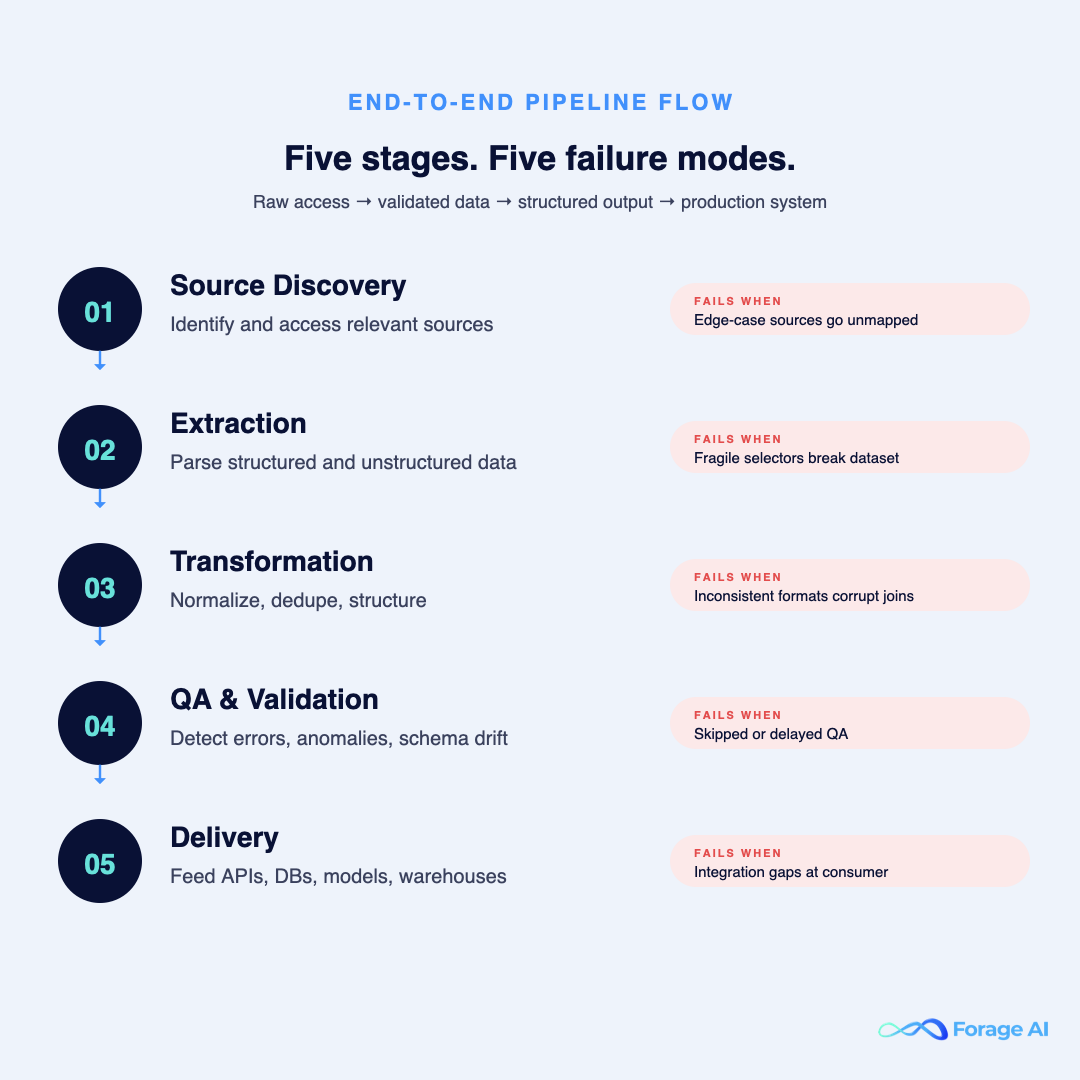

A custom pipeline reads as five stages, and where it fails is where the architecture is incomplete.

| Stage | What it does | Common failure mode |
|---|---|---|
| Source Discovery | Identify and access relevant sources | Missing edge-case sources (regional variants, paywalled, robots-restricted) |
| Extraction | Parse structured / unstructured data | Selector fragility, anti-bot escalation |
| Transformation | Normalize and structure data | Schema drift, idempotency gaps |
| QA & Validation | Detect errors and anomalies | Skipped, delayed, or alerts that don’t fire on the right signals |
| Delivery | Feed into systems (API/DB/warehouse) | Integration boundary failures, retry semantics gaps |

Source Discovery is where most edge-case data loss happens. Production gaps trace to a forgotten 5% of sources, not the obvious 95%. Regional variants of marketplace websites, paywalled versus public versions, robots-restricted endpoints — easy to overlook in scoping, expensive to back-fill later.

Extraction is where the anti-bot strategy lives. Proxy rotation, fingerprint randomization, headless browser variant selection, residential vs. ISP-tier mix. A pipeline that worked last quarter against a target may fail this quarter against the same target — Cloudflare’s bot management iterations have shifted proxy economics decisively in the last 18 months. Datacenter proxies that returned high success rates on top-1000 websites in 2023 now return materially lower rates on the same targets; residential and ISP-tier proxies hold acceptable rates but at a markedly higher per-request cost. The technical implication: proxy strategy is now a budget conversation as much as an architectural one. For operators who want depth here, our proxy guide for AI data extraction covers the tradeoffs.
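One way to make the budget conversation concrete is to compare proxy tiers by expected cost per successful fetch rather than by list price. The sketch below assumes failed requests are still billed and that attempts are independent, so the expected number of attempts per success is 1 / success rate. The prices and success rates are invented for illustration and are not measurements.

```python
def cost_per_success(cost_per_request: float, success_rate: float) -> float:
    """Expected spend per successful fetch when failed requests are still billed."""
    if not 0 < success_rate <= 1:
        raise ValueError("success_rate must be in (0, 1]")
    return cost_per_request / success_rate


# Illustrative numbers only; they are assumptions, not data from any provider.
tiers = {
    "datacenter": {"cost_per_request": 0.0002, "success_rate": 0.25},
    "residential": {"cost_per_request": 0.0030, "success_rate": 0.90},
}

effective = {name: cost_per_success(**tier) for name, tier in tiers.items()}
```

With real figures plugged in, the same calculation shows how far a cheap tier's success rate has to fall before a more expensive tier becomes the lower-cost option per usable record.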

Transformation is where idempotent normalization and write-time schema enforcement live. The most common failure here isn’t bad logic — it is logic that wasn’t versioned, so a schema change in one source silently corrupts joins in the warehouse. Source adapters should expose a target-schema version explicitly; consumers pin to a version; breaking changes get a new version, not a mutation.
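Version pinning can be sketched as a registry of normalizers keyed by target-schema version. The versions, field names, and the `view_for` helper here are hypothetical; the point is only that a breaking change (splitting price into amount and currency) becomes `v2` while `v1` consumers keep getting the shape they pinned.

```python
def normalize_v1(raw: dict) -> dict:
    """v1 contract: price is a single field."""
    return {"sku": raw["sku"], "price": raw["price"]}


def normalize_v2(raw: dict) -> dict:
    """v2 contract: a breaking change, so price is split into amount and currency."""
    return {
        "sku": raw["sku"],
        "price_amount": raw["price"],
        "price_currency": raw["currency"],
    }


# Hypothetical registry: old versions stay available after a new one ships.
NORMALIZERS = {"v1": normalize_v1, "v2": normalize_v2}


def view_for(pinned_version: str, raw: dict) -> dict:
    """Return the record in the shape of the version the consumer pinned to."""
    if pinned_version not in NORMALIZERS:
        raise ValueError(f"unknown schema version: {pinned_version}")
    return {"schema_version": pinned_version, **NORMALIZERS[pinned_version](raw)}
```

A consumer that joins on `price` keeps calling `view_for("v1", ...)` until it chooses to migrate, so the upstream change never silently alters its join columns.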

QA & Validation is where the alerts matter. The wrong alert is “the pipeline failed.” The right alert is “the pipeline succeeded but the field-level distribution shifted by more than X%, the row count dropped by more than Y%, or the freshness lag exceeded the SLO.” That last category — succeeded but shifted — is where silent failures hide.
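The "succeeded but shifted" checks can be sketched as a comparison between the current run and a baseline run. The thresholds below (20% median shift, 10% row drop, 240-minute freshness SLO) are placeholder values standing in for the X, Y, and SLO above, and using the median of a single numeric field as the distribution signal is a simplifying assumption.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class RunStats:
    row_count: int
    prices: list
    freshness_lag_minutes: float


def shift_alerts(
    current: RunStats,
    baseline: RunStats,
    max_median_shift: float = 0.20,      # placeholder for "X%"
    max_row_drop: float = 0.10,          # placeholder for "Y%"
    freshness_slo_minutes: float = 240,  # placeholder SLO
) -> list:
    """Alerts for a run that technically succeeded but looks different from baseline."""
    alerts = []
    if current.prices and baseline.prices:
        base_med = median(baseline.prices)
        cur_med = median(current.prices)
        if base_med and abs(cur_med - base_med) / abs(base_med) > max_median_shift:
            alerts.append("distribution_shift")
    if baseline.row_count:
        drop = (baseline.row_count - current.row_count) / baseline.row_count
        if drop > max_row_drop:
            alerts.append("row_count_drop")
    if current.freshness_lag_minutes > freshness_slo_minutes:
        alerts.append("freshness_lag")
    return alerts
```

An empty list means the run both succeeded and resembles the baseline; any entry is a reason to hold delivery or page someone even though no exception was raised.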

Delivery is where API contract stability matters. Idempotency at the consumer is non-negotiable. Batch vs streaming changes the failure mode (batch retries are easy, streaming retries require windowed semantics). Replay support — the ability to reconstruct the same delivery for a past time window — is what saves you when downstream systems detect corruption days later.
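Idempotent consumption and replay fit together: if the consumer upserts by key, re-delivering a past window is safe. The sketch below assumes a delivery log held as a list of dicts with `key` and `delivered_at` fields and an in-memory consumer; both are stand-ins for whatever log and sink a real pipeline uses.

```python
from datetime import datetime


class Consumer:
    """Toy sink that upserts by key, so applying a record twice changes nothing."""

    def __init__(self) -> None:
        self.rows = {}

    def apply(self, record: dict) -> None:
        self.rows[record["key"]] = record


def replay(log: list, consumer: Consumer, start: datetime, end: datetime) -> int:
    """Re-deliver every logged record with start <= delivered_at < end."""
    delivered = 0
    for record in log:
        if start <= record["delivered_at"] < end:
            consumer.apply(record)
            delivered += 1
    return delivered
```

Running the same replay twice delivers the same records both times and leaves the consumer in the same state, which is the property that makes a late corruption fix a routine operation rather than a manual cleanup.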

Expert Insight: The QA stage is where operational discipline pays compounding dividends. Teams that invest there catch silent failures before they propagate; teams that skip it discover failures via downstream business impact, weeks later. The cost of fixing a silent failure scales with how long it has been corrupting decisions.

Quick Summary — What stages does a custom pipeline have? Five — Source Discovery, Extraction, Transformation, QA, Delivery. Most failure modes live at QA; most cost overruns live at Extraction (proxy strategy).

When Is Custom Required? The SHIFT Self-Assessment

Most teams misdiagnose scraping problems as technical issues. They are not. They are system-maturity issues.

The SHIFT Letters

- S | Scale. Sources × records, but more importantly, the moment when adding source N+1 forces you to refactor source 1. For most operational teams, this is 30-60 sources or 100K+ records per day.

- H | Heterogeneity. Different structures, formats, geographies. E-commerce data from the US, EU, and APAC marketplaces — each with different JavaScript frameworks, currency rules, and locale parsing. Heterogeneity kills generic tooling first, usually before raw scale does.

- I | Impact. Does the data feed a production decision? A pricing engine fed by competitor data has a 1:1 mapping between data error and revenue at risk. Impact converts data quality from a hygiene metric to a P&L metric.

- F | Freshness. Daily is the inflection. Hourly forces architectural change (you can’t brute-force it with overnight batches anymore). Real-time turns extraction into a streaming problem with windowed semantics.

- T | Trust. Regulated data, customer-facing surfaces, audit-trailed extractions. Trust is where governance lives, and it is the dimension teams underestimate most consistently.

If you score high on three or more letters, custom pipelines are not optional.

A Worked Example

A B2B SaaS firm extracts competitor pricing data from 60+ sources, refreshed every 4 hours, feeding both a customer-facing dashboard and an internal pricing model. They score: Scale high (60+ sources), Heterogeneity high (mixed regional marketplaces), Impact high (drives revenue), Freshness high (sub-daily), Trust medium-high (dashboard accuracy is contractually committed). All five letters lean high. Custom is the only viable answer; the only remaining question is whether to build or partner.
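The scoring rule is simple enough to write down directly. This sketch counts the letters rated "high" and applies the three-or-more threshold; the rating labels and function names are assumptions, and treating the example's "medium-high" Trust as "high" is a judgment call made here to match the article's reading of that case.

```python
SHIFT = ("scale", "heterogeneity", "impact", "freshness", "trust")


def shift_score(ratings: dict) -> int:
    """Count how many of the five SHIFT letters are rated 'high'."""
    missing = [letter for letter in SHIFT if letter not in ratings]
    if missing:
        raise ValueError(f"missing ratings for: {missing}")
    return sum(1 for letter in SHIFT if ratings[letter] == "high")


def recommendation(score: int) -> str:
    """Three or more high letters means tools have stopped being enough."""
    return "custom pipeline" if score >= 3 else "tool"


# The worked example, with Trust ("medium-high") counted as high by assumption.
example = {
    "scale": "high",
    "heterogeneity": "high",
    "impact": "high",
    "freshness": "high",
    "trust": "high",
}
example_score = shift_score(example)
example_recommendation = recommendation(example_score)
```

Rating Trust as "medium" instead would still leave four high letters, so the recommendation for this firm does not depend on that call.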

What If You Score Low?

If the score is 1 or 2, a tool is probably the right answer. The cost of running custom infrastructure for a small, stable, low-impact extraction operation is genuinely higher than the cost of a tool subscription plus weekly babysitting. Don’t over-engineer.

Expert Insight: Teams typically score themselves correctly on Scale and Heterogeneity. They underestimate Trust until an SLA breach makes it visible. The screening question that exposes the underestimation: “what does an audit of this data require, and can we produce it within 72 hours?”

Quick Summary — How do you know it’s time? Score the SHIFT letters honestly. Three or more high → tools have stopped working for you, even if the team hasn’t admitted it yet.

Build vs Buy vs Hybrid (the honest economics)

Once a team accepts that a custom solution is required, the next question is who will build and operate it. Three realistic paths.

Build path

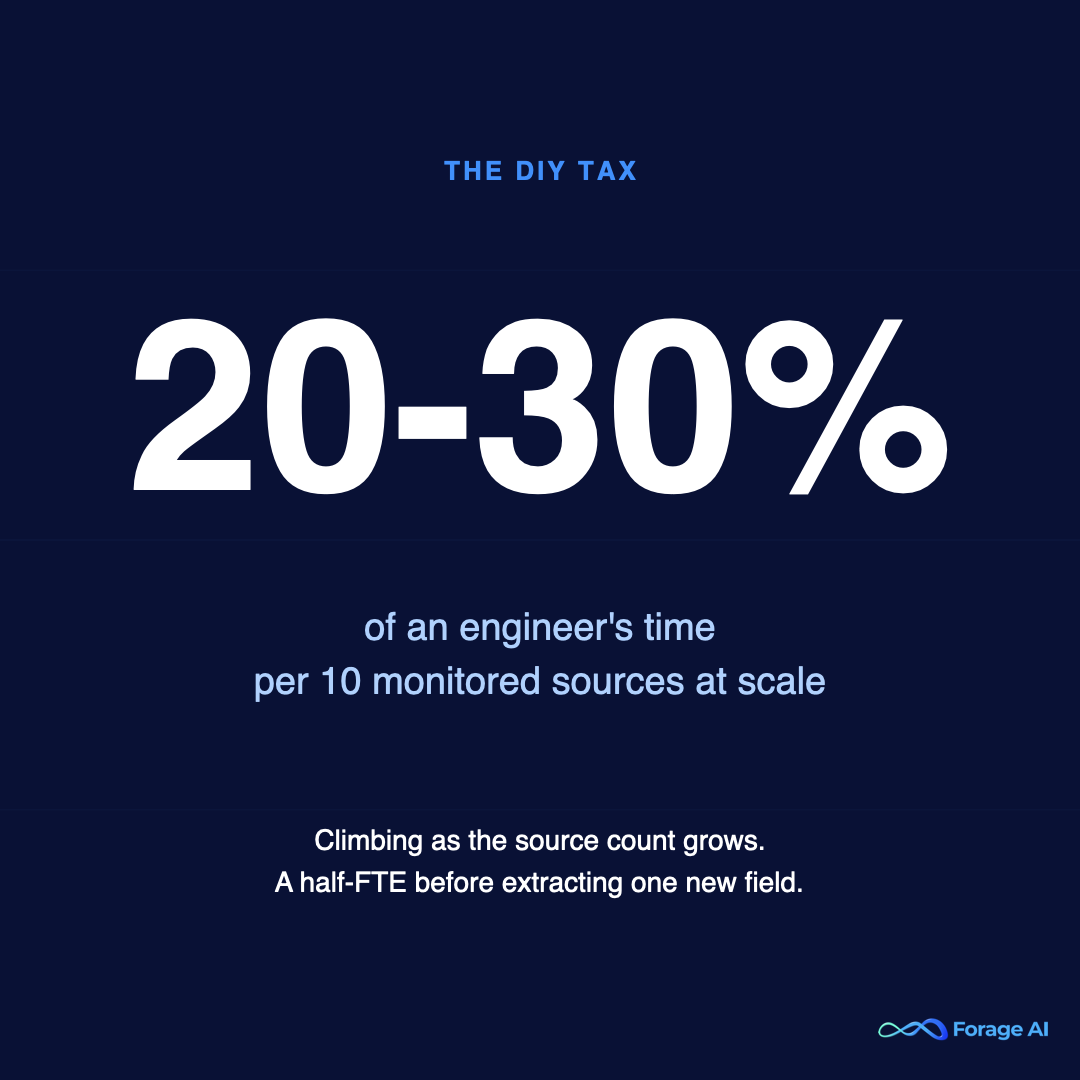

In-house from scratch. 6-12 months to production-grade for a non-trivial source count. Requires dedicated engineering, plus operations and legal capacity. The maintenance load grows with source count: at scale it consumes a real fraction of senior-engineer time, and that fraction rises as sources are added. The honest version of the build path is that the work is unrewarding, senior engineers churn out of it, and hiring backfills are slow in the 2026 market.

Buy path

Managed external data infrastructure. 2-4 weeks to reliable data delivery for a partner whose infrastructure already exists; only source-specific configuration is bespoke. Higher per-source unit cost. Lower total cost of ownership at scale because the infrastructure, the proxy economics, the QA layer, the legal review, and the on-call rotation are all absorbed. This is where solutions like Forage AI’s custom web data extraction sit — the four properties from the previous section are already engineered into the pipeline shape, so internal teams focus on using the data, not maintaining infrastructure. For a broader view of what managed data extraction services look like across the category in 2026, the pillar guide maps the landscape end-to-end. For the deeper buyer-evaluation framework, our build-vs-buy guide covers the full TCO model.

Hybrid path

A tool for prototype and exploration; custom (build or buy) for production. Most non-trivial production teams converge on this shape over 12-18 months. The tool remains useful for ad hoc, one-off extractions; the production pipeline handles everything that requires reliability — the automated data extraction layer that scheduled jobs, retries, and downstream consumers depend on. The mistake to avoid is staying on tools longer than the SHIFT score warrants — the second silent-failure incident is the moment to commit, not the moment to investigate.

The Pivot Point

The pivot from build to buy rarely happens at planning time. It happens in retrospect — usually after the second silent-failure incident, or after the procurement team flags audit-trail gaps in a deal. The economic argument for switching exists long before the trigger event; the political will to switch arrives only after. If you are reading this preemptively, consider yourself ahead of the curve. For the regret-driven version of this story, why product teams regret building automated web scraping in-house is worth a read.

Expert Insight: The clearest signal that a team has crossed from “scraping is a project” to “external data is infrastructure” is when a new business question gets answered by adding a source to the existing pipeline in days, not by starting a new initiative. That latency — question to grounded answer — is the metric data leaders should track for their AI program.

Quick Summary — Build, buy, or hybrid? Build only where source coverage is so narrow and so strategic that owning it confers an advantage. Buy everywhere else. Hybrid is where most production teams end up; the question is when to commit, not whether.

The Compliance Angle Most Teams Underestimate

Production-scale custom scraping runs through a regulatory landscape that has tightened meaningfully since 2024. GDPR, CCPA/CPRA, jurisdictional interpretations of the Computer Fraud and Abuse Act, and a growing body of court precedent on scraping public data — these have pushed compliance from a peripheral concern to a procurement-gating one.

The mechanics matter. robots.txt is a norm, not a binding contract — but it is increasingly treated as a starting point by courts and counterparties. Terms-of-Service compliance varies wildly by source. Public-data extraction has supportive precedent in the United States but contested precedent in the EU. None of these lives in a stable equilibrium; legal posture must be jurisdiction-aware and revisited as case law shifts.
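For the robots.txt part of that posture, Python's standard library includes a parser that can serve as a first gate before any jurisdiction-specific policy is applied. The helper name `allowed`, the sample rules, and the `example-bot` user agent are illustrative; a real pipeline would fetch the live file and layer its own per-source policy on top of this check.

```python
from urllib.robotparser import RobotFileParser


def allowed(robots_txt: str, user_agent: str, url: str) -> bool:
    """Check a URL against robots.txt rules that have already been fetched."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


# Sample rules for illustration only.
SAMPLE_ROBOTS = """\
User-agent: *
Disallow: /private/
"""

public_ok = allowed(SAMPLE_ROBOTS, "example-bot", "https://example.com/public/page")
private_ok = allowed(SAMPLE_ROBOTS, "example-bot", "https://example.com/private/page")
```

Logging the result of this check alongside each fetch gives the audit trail a record of what the source's stated norm was at access time, whatever the eventual legal interpretation.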

Custom pipelines handle compliance differently from generic tools. Audit trails are first-class. IP rotation policies are documented and source-aware. Source provenance is captured per record (URL, timestamp, access method), so any extracted data point can be traced back. Take-down and opt-out plumbing exists at the consumer side. These properties are not optional for production extraction in 2026 — they are what passes a procurement legal review.

When evaluating a partner for custom extraction, the compliance questions to surface are: How is source provenance documented and retained? What is the legal review process for new source onboarding? How are interpretations of robots.txt and ToS handled per jurisdiction? What is the take-down SLA? Our legal and ethical web scraping guide covers this terrain in more depth.

This article is for informational purposes only and does not constitute legal advice. Consult qualified counsel for compliance specific to your jurisdiction and source categories.

Expert Insight: Compliance underestimation is a procurement risk, not a technical risk. The pipelines that pass legal review close deals faster and avoid the failed-pilot pattern where a working extraction can’t graduate to production because it can’t pass an audit.

Quick Summary — What is the compliance posture for custom web scraping? Defensible with audit trails, source provenance, ToS-aware extraction, and jurisdiction-aware policies. Without those, the technical pipeline becomes a procurement blocker.

Frequently Asked Questions

What is the difference between custom web scraping and using a scraping tool?

A tool handles extraction. Custom web scraping is a production-grade pipeline that handles extraction plus per-source adaptation, schema enforcement, embedded QA, and audit-trail provenance. The difference is not feature-by-feature; it is whether the data is governed.

How long does a custom web scraping pipeline take to build?

In-house builds typically reach production-grade reliability for a non-trivial source count in 6-12 months. Managed-partner deployments typically reach reliable data delivery in 2-4 weeks because the infrastructure already exists; only source-specific configuration is bespoke.

Is custom web scraping legal?

When sourced from public data, with robots.txt-aware policies, ToS-aligned access patterns, and audit-ready provenance documentation, custom web scraping is generally defensible in most jurisdictions. The legal posture varies by source category, jurisdiction, and intended use. Consult qualified counsel for your specific situation.

How much does custom web scraping cost?

In-house total cost of ownership is highest: multi-engineer commitment plus proxy and infrastructure spend, both of which climb with source count. Off-the-shelf tools are lowest in subscription cost but highest in operational burden at scale. Managed partners sit in the middle with predictable pricing tied to source count and freshness. Order-of-magnitude differences exist; specific numbers depend heavily on scope.

Can a small data team operate custom scraping at scale?

Yes — if the team chooses the buy or hybrid path. The minimum viable internal team for managed-service-led scraping is one or two people who own data integration and consumer-side reliability; the partner absorbs extraction, QA, and infrastructure. Pure build at scale requires more.

Conclusion

Custom web scraping is not a tooling upgrade. It is a shift in how a team treats data: from output to operation, from a script to a pipeline, from a project to infrastructure.

Generic tools work when data is optional. They fail when data becomes foundational. The transition is not from simple to complex. It is from fragile systems to reliable ones.

That transition happens whether a team plans for it or not. The question is whether it happens proactively, before silent failures corrupt downstream decisions, or reactively, after the second incident in a quarter forces it. The teams that move proactively spend the next quarter shipping new capabilities. The teams that move reactively spend it cleaning up.

If the SHIFT score has been ticking up — sources growing, freshness tightening, audit demands arriving — the work to get ahead of the next failure starts now, not after.

About the author: The Forage AI team writes about how enterprise data teams source, structure, and govern external data that their AI systems and analytics depend on. Forage AI builds managed external data infrastructure for organizations running large-scale extraction programs. Learn more at forage.ai.

Sources

- Cloudflare bot management public timeline (2024-2025 anti-bot iterations).

- Forage AI internal observations across managed customer accounts (2025-2026).

- Industry analyst directional reads on managed external data services (Gartner, IDC, Forrester). Consult current sector reports for specific market-sizing.

- Public legal commentary on US/EU web-scraping case law.

Related Articles

- Automated Data Collection — the pillar on how data collection automation is architected end-to-end as governed infrastructure.

- Data Extraction Automation — the pillar on how automated data extraction pipelines are architected and operated.

- A Guide To Modern Data Extraction Services in 2026 — the category-level pillar covering managed data extraction services end-to-end.

- Why Product Teams Regret Building Automated Web Scraping In-House — the regret-driven counterpart to this primer.

- Build or Buy: A Strategic Guide to Web Data Extraction in 2025 — the deeper buyer-evaluation framework.

- Top Proxies for AI Data Extraction — depth on the extraction stage’s anti-bot layer.